Top 17 Most-Asked Manual Testing Interview Questions

Harshit Paul

Posted On: October 24, 2018


Preparing for an interview as a manual tester? Here is a bit of help. Knowing what interviewers most often ask gives you a clear edge. I have prepared a short list of the most commonly asked manual testing interview questions, along with their answers. Let’s jump right in!

1. Advantages of black box testing

- Testing from the end user’s point of view.

- No knowledge of programming languages required for testing.

- Identifying functional issues in the system.

- Testers and developers work independently of each other.

- Possible to design test cases as soon as specifications are complete.
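To illustrate designing test cases from the specification alone, here is a minimal Python sketch. It assumes a hypothetical requirement ("orders of 100 or more get 10% off; smaller orders pay full price") and a hypothetical function `apply_discount`. The tests check only inputs and expected outputs and never depend on how the function works internally.

```python
def apply_discount(total: float) -> float:
    """Hypothetical system under test. A black box tester treats it as opaque."""
    if total >= 100:
        return round(total * 0.9, 2)
    return total


# Test cases derived only from the hypothetical requirement, not from the code.
def test_below_threshold_pays_full_price() -> None:
    assert apply_discount(99.99) == 99.99


def test_at_threshold_gets_discount() -> None:
    assert apply_discount(100) == 90.0


def test_above_threshold_gets_discount() -> None:
    assert apply_discount(250) == 225.0


if __name__ == "__main__":
    for case in (test_below_threshold_pays_full_price,
                 test_at_threshold_gets_discount,
                 test_above_threshold_gets_discount):
        case()
    print("All specification-based tests passed.")
```

The boundary value (exactly 100) comes straight from the wording of the requirement, which is why such tests can be written as soon as the specification is complete.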

2. Statement coverage

Statement coverage is a white box testing metric that checks whether every statement in the program has been executed at least once by the tests.

It is calculated as:

Statement Coverage = (No. of Statements Executed / Total No. of Statements) × 100%

It helps in:

- Verifying code correctness.

- Understanding the flow of control.

- Measuring code quality.
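The statement coverage formula can be expressed as a small Python function. This is a sketch that assumes the statements have already been identified (for example, by line number) and that a coverage tool or trace has reported which ones the tests executed. `statement_coverage` is a hypothetical helper, not part of any tool.

```python
def statement_coverage(executed: set[int], all_statements: set[int]) -> float:
    """Return statement coverage as a percentage.

    executed:       IDs (e.g. line numbers) of statements the tests ran.
    all_statements: IDs of every executable statement in the program.
    """
    if not all_statements:
        raise ValueError("the program has no executable statements")
    # Ignore any reported IDs that are not real statements of the program.
    covered = executed & all_statements
    return 100.0 * len(covered) / len(all_statements)


if __name__ == "__main__":
    program = set(range(1, 11))              # 10 executable statements
    ran = {1, 2, 3, 4, 5, 6, 7}              # the tests executed 7 of them
    print(statement_coverage(ran, program))  # 70.0
```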

3. Bug life cycle

A bug life cycle has the following phases:

- NEW or OPEN, when a tester finds and reports the bug.

- REJECTED, if the project manager finds the bug invalid.

- POSTPONED, if the bug is valid but not in the scope of the current release.

- DUPLICATE, if the same bug has already been reported.

- IN-PROGRESS, when the bug is assigned to a developer.

- FIXED, when the developer has fixed the bug.

- CLOSED, if the tester retests the fix and confirms that the bug is resolved.

- RE-OPENED, if the retest fails and the bug is still present.
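The bug life cycle behaves like a state machine. The sketch below encodes one plausible set of allowed transitions between the states listed above. The transition table and the `Bug` class are assumptions for illustration; real bug trackers usually let each team configure its own workflow.

```python
from enum import Enum


class BugState(Enum):
    NEW = "NEW"
    REJECTED = "REJECTED"
    POSTPONED = "POSTPONED"
    DUPLICATE = "DUPLICATE"
    IN_PROGRESS = "IN-PROGRESS"
    FIXED = "FIXED"
    CLOSED = "CLOSED"
    REOPENED = "RE-OPENED"


# Assumed workflow: which states a bug may move to from each state.
TRANSITIONS: dict[BugState, set[BugState]] = {
    BugState.NEW: {BugState.REJECTED, BugState.POSTPONED,
                   BugState.DUPLICATE, BugState.IN_PROGRESS},
    BugState.POSTPONED: {BugState.IN_PROGRESS},           # picked up in a later release
    BugState.IN_PROGRESS: {BugState.FIXED},
    BugState.FIXED: {BugState.CLOSED, BugState.REOPENED},  # outcome of the retest
    BugState.REOPENED: {BugState.IN_PROGRESS},
    BugState.REJECTED: set(),                              # terminal states
    BugState.DUPLICATE: set(),
    BugState.CLOSED: set(),
}


class Bug:
    def __init__(self, title: str) -> None:
        self.title = title
        self.state = BugState.NEW
        self.history = [BugState.NEW]

    def move_to(self, new_state: BugState) -> None:
        """Change state, refusing transitions the workflow does not allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"cannot move from {self.state.value} to {new_state.value}")
        self.state = new_state
        self.history.append(new_state)


if __name__ == "__main__":
    bug = Bug("Login button unresponsive")
    for step in (BugState.IN_PROGRESS, BugState.FIXED, BugState.REOPENED,
                 BugState.IN_PROGRESS, BugState.FIXED, BugState.CLOSED):
        bug.move_to(step)
    print([s.value for s in bug.history])
```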

4. Agile testing

Agile testing follows an iterative and incremental process that aims at adaptability and customer satisfaction through rapid delivery of the product. The product is broken down into incremental builds, which are delivered and tested iteratively.


5. Monkey testing

In monkey testing, the tester enters random input to check whether it leads to a system crash. There are two kinds of monkeys: smart monkeys and dumb monkeys.

A smart monkey has some knowledge of the application under test and is used for load testing and stress testing, but it is expensive to develop.

Dumb monkeys, on the other hand, have no knowledge of the application and are meant for elementary testing. They can uncover severe bugs, such as crashes, at low cost.
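A dumb monkey can be sketched in a few lines of Python: it throws random strings at a function and records every input that raises an unhandled exception. The target `average_word_length` is hypothetical and contains a deliberate bug (it divides by zero when the input has no words). A smart monkey would instead generate inputs from a model of the application, which is what makes it costlier to build.

```python
import random
import string
from typing import Callable


def average_word_length(sentence: str) -> float:
    """Hypothetical function under test with a deliberate bug:
    it divides by zero when the input contains no words."""
    words = sentence.split()
    return sum(len(w) for w in words) / len(words)


def dumb_monkey(target: Callable[[str], object], runs: int = 1000,
                seed: int = 42) -> list[tuple[str, str]]:
    """Feed `runs` random strings to `target`; return (input, error name) per crash."""
    rng = random.Random(seed)  # fixed seed so every crash can be reproduced
    crashes: list[tuple[str, str]] = []
    for _ in range(runs):
        length = rng.randint(0, 20)
        text = "".join(rng.choice(string.printable) for _ in range(length))
        try:
            target(text)
        except Exception as exc:  # any unhandled exception counts as a crash
            crashes.append((text, type(exc).__name__))
    return crashes


if __name__ == "__main__":
    found = dumb_monkey(average_word_length)
    print(f"{len(found)} crashing inputs; first few: {found[:3]!r}")
```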

6. Verification and validation

| Verification | Validation |
| --- | --- |
| A static process of verifying documents, code, design, and programs without executing code. | A dynamic process of testing the actual product by executing code. |
| Methods include inspections, reviews, walkthroughs, and desk-checking. | Methods include black box, gray box, and white box testing. |
| Checks whether the software conforms to its specifications. | Checks whether the software meets the client's requirements. |
| Targets include the requirements specification, software architecture, design, and database schema. | The target is a unit module, several modules, or the complete product. |
| Performed by the QA team. | Performed by the testers. |

7. Baseline testing

Baseline testing involves running a set of test cases to analyze the software's performance. The results become the benchmark for future tests: later runs are compared with these earlier results to see whether performance has improved or degraded.
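Comparing a new run against the baseline can be sketched in Python as follows. The metric names, the millisecond timings, and the 10% tolerance are assumptions chosen for illustration. The function classifies each metric as improved, regressed, or unchanged relative to the recorded baseline, treating lower values as better.

```python
def compare_to_baseline(baseline: dict[str, float],
                        current: dict[str, float],
                        tolerance: float = 0.10) -> dict[str, str]:
    """Classify each baseline metric (lower is better, e.g. response time)."""
    verdicts: dict[str, str] = {}
    for name, base in baseline.items():
        if name not in current:
            verdicts[name] = "missing"  # metric not measured in this run
            continue
        if base <= 0:
            raise ValueError(f"baseline for {name!r} must be positive")
        change = (current[name] - base) / base  # relative change vs. baseline
        if change > tolerance:
            verdicts[name] = "regressed"
        elif change < -tolerance:
            verdicts[name] = "improved"
        else:
            verdicts[name] = "unchanged"
    return verdicts


if __name__ == "__main__":
    baseline_ms = {"login": 200.0, "search": 350.0, "checkout": 500.0}
    current_ms = {"login": 210.0, "search": 450.0, "checkout": 400.0}
    print(compare_to_baseline(baseline_ms, current_ms))
    # {'login': 'unchanged', 'search': 'regressed', 'checkout': 'improved'}
```

Once a version's results are accepted, its measurements can serve as the baseline for the next version.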

8. Retesting and regression testing

| Retesting | Regression Testing |
| --- | --- |
| Verifies whether a previously reported defect has been fixed. | Verifies that a recent bug fix has not caused other components to work incorrectly. |
| Targets the fixed bug specifically. | Covers all components that the fix could affect. |
| Runs test cases that failed earlier. | Runs test cases that passed in previous builds. |
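The difference in which test cases each activity picks can be sketched in Python. The `RecordedTest` record, the bug ID `BUG-101`, and the component names are hypothetical. The sketch also assumes each test is tagged with the components it exercises, which in practice might come from a traceability matrix or coverage data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RecordedTest:
    name: str
    components: frozenset[str]        # components this test exercises
    last_result: str                  # "pass" or "fail" in the previous build
    linked_bug: Optional[str] = None  # bug ID this test reproduces, if any


def select_tests(suite: list[RecordedTest], fixed_bug: str,
                 changed_components: set[str]) -> tuple[list[str], list[str]]:
    """Split the suite into retests and regression tests for one bug fix."""
    # Retesting: the tests that failed earlier because of the fixed bug.
    retests = [t.name for t in suite
               if t.linked_bug == fixed_bug and t.last_result == "fail"]
    # Regression testing: previously passing tests that touch changed components.
    regression = [t.name for t in suite
                  if t.last_result == "pass" and t.components & changed_components]
    return retests, regression


if __name__ == "__main__":
    suite = [
        RecordedTest("test_coupon_applied", frozenset({"cart", "pricing"}), "fail", "BUG-101"),
        RecordedTest("test_cart_total", frozenset({"cart"}), "pass"),
        RecordedTest("test_invoice_tax", frozenset({"pricing", "billing"}), "pass"),
        RecordedTest("test_login", frozenset({"auth"}), "pass"),
    ]
    # The fix for BUG-101 changed only the pricing component.
    print(select_tests(suite, "BUG-101", {"pricing"}))
    # (['test_coupon_applied'], ['test_invoice_tax'])
```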

9. Severity and priority of bugs with examples

Priority defines the importance of a bug from a business point of view (how soon it should be fixed), while severity is the extent to which it affects the application’s functionality.

Examples:

- Error in displaying company logo: high priority, low severity.

- A rare test case that leads to a system crash: low priority, high severity.

- Failure of online payments: high priority, high severity.

- Grammatical error in an alert box: low priority, low severity.
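Because priority and severity are independent, a bug record needs both fields. The Python sketch below models the four examples above and orders them for fixing. The two-level scale and the rule "priority first, then severity" are assumptions for illustration; teams often use more levels and their own triage rules.

```python
from enum import IntEnum
from typing import NamedTuple


class Level(IntEnum):
    LOW = 1
    HIGH = 2


class Defect(NamedTuple):
    summary: str
    priority: Level  # business importance: how urgently it should be fixed
    severity: Level  # technical impact on the application's functionality


def triage_order(defects: list[Defect]) -> list[Defect]:
    """Order defects for fixing: priority first, then severity (assumed policy)."""
    return sorted(defects, key=lambda d: (d.priority, d.severity), reverse=True)


if __name__ == "__main__":
    backlog = [
        Defect("Grammatical error in an alert box", Level.LOW, Level.LOW),
        Defect("Rare test case leads to a system crash", Level.LOW, Level.HIGH),
        Defect("Error in displaying company logo", Level.HIGH, Level.LOW),
        Defect("Failure of online payments", Level.HIGH, Level.HIGH),
    ]
    for d in triage_order(backlog):
        print(d.priority.name, d.severity.name, d.summary)
```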

10. Alpha and beta testing

| Alpha Testing | Beta Testing |
| --- | --- |
| Performed by in-house developers and testers. | Performed by end users. |
| Aims to find bugs and issues before the product is released. | A beta version is released to end users to obtain feedback. |
| Takes place in a lab environment. | Takes place in a real-world environment. |

11. Test driver and test stub

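A test driver is a dummy software component that calls the module under test with dummy inputs. Drivers are used in bottom-up testing, where the higher-level modules that would normally call the module are not yet available.

A test stub is a dummy software component that is called by the module under test in place of a lower-level module that is not yet available, and that returns predefined output to it. Stubs are used in top-down testing.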
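Both ideas fit in a short Python sketch. For brevity it places a driver and a stub around a single hypothetical module, `order_total`, which depends on a lower-level tax module that does not exist yet; all names and the flat 10% rate are assumptions. In strict bottom-up testing the lower module would be real and only the driver would be needed, while in top-down testing the caller would be real and only the stub would be needed.

```python
from typing import Callable


def order_total(prices: list[float], region: str,
                get_tax_rate: Callable[[str], float]) -> float:
    """Module under test (hypothetical). Its lower-level tax module is passed
    in as `get_tax_rate` so that a stub can stand in for it."""
    subtotal = sum(prices)
    return round(subtotal * (1 + get_tax_rate(region)), 2)


def tax_rate_stub(region: str) -> float:
    """Test stub: replaces the unfinished tax module that the module under
    test calls, and returns a fixed, predictable rate for any region."""
    return 0.10


def driver() -> None:
    """Test driver: plays the role of the missing caller (for example, a
    checkout screen), feeds dummy inputs to the module, and checks the output."""
    result = order_total([10.0, 20.0], "EU", tax_rate_stub)
    assert result == 33.0, f"unexpected total {result}"
    print("order_total returned", result)


if __name__ == "__main__":
    driver()
```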

12. Need for test strategy

A test strategy is an official, finalized document that describes the testing methods, plan, and test cases.

It is needed for:

- Understanding the testing process.

- Reviewing the test plan.

- Identifying roles and responsibilities.

- Identifying possible testing issues early so they can be resolved.

13. Error guessing and error seeding

Both are methods of test case design. In error guessing, the tester uses experience to guess the errors that might occur in the system and designs test cases to catch them. Error seeding, on the other hand, involves deliberately adding known faults to the code to estimate the rate at which faults are detected and the number of errors that remain.
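The estimate behind error seeding can be worked through in Python. This sketch uses a common seeding estimate: if the tests found s of the S seeded faults, their detection rate is s / S, and if they also found r real faults, the total number of real faults is estimated as r × S / s. It assumes seeded faults are about as hard to find as real ones; the function name and the numbers are hypothetical.

```python
def estimate_remaining_faults(seeded_total: int, seeded_found: int,
                              real_found: int) -> tuple[float, float]:
    """Return (detection rate, estimated number of real faults still undetected).

    Assumes seeded faults are as hard to detect as real (indigenous) faults.
    """
    if not 0 < seeded_found <= seeded_total:
        raise ValueError("need 0 < seeded_found <= seeded_total")
    detection_rate = seeded_found / seeded_total
    estimated_real_total = real_found / detection_rate
    return detection_rate, estimated_real_total - real_found


if __name__ == "__main__":
    # 20 faults seeded; testing found 15 of them plus 30 real faults.
    rate, remaining = estimate_remaining_faults(20, 15, 30)
    print(f"detection rate {rate:.0%}, about {remaining:.0f} real faults remain")
    # detection rate 75%, about 10 real faults remain
```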

14. Benchmark testing

In benchmark testing, the application’s performance is compared against an accepted industry standard. It differs from baseline testing in its point of comparison: baseline testing compares each version with the application’s own earlier results so that performance improves from version to version, whereas benchmark testing shows where the application’s performance stands relative to others in the industry.

15. Cyclomatic complexity

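Cyclomatic complexity is a measure of a program’s complexity, calculated from its control flow graph. It counts the number of linearly independent paths through the program. The graph consists of:

- Nodes: each statement (or block of sequential statements) of the program.

- Edges: a connection between two nodes that represents the flow of control.

It is calculated as:

Cyclomatic Complexity M = E - N + 2P

where E is the number of edges, N is the number of nodes, and P is the number of connected components (1 for a single program or function).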
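Applying the standard formula M = E - N + 2P (E edges, N nodes, P connected components) in Python gives the sketch below. It takes a control flow graph as node and edge lists; the node labels and the example graph are hypothetical.

```python
from collections import defaultdict


def cyclomatic_complexity(nodes: list[str], edges: list[tuple[str, str]]) -> int:
    """Compute M = E - N + 2P for a control flow graph.

    P (connected components) is counted on the undirected version of the graph,
    so the graph of a single function gives P = 1.
    """
    neighbours: dict[str, set[str]] = defaultdict(set)
    for a, b in edges:
        neighbours[a].add(b)
        neighbours[b].add(a)

    seen: set[str] = set()
    components = 0
    for start in nodes:
        if start in seen:
            continue
        components += 1  # found a new, unvisited component
        stack = [start]
        while stack:  # depth-first search over this component
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            stack.extend(neighbours[node] - seen)
    return len(edges) - len(nodes) + 2 * components


if __name__ == "__main__":
    # if/else: decision -> then / else -> join
    nodes = ["decision", "then", "else", "join"]
    edges = [("decision", "then"), ("decision", "else"),
             ("then", "join"), ("else", "join")]
    print(cyclomatic_complexity(nodes, edges))  # 2
```

For structured code with two-way decisions, the result also equals the number of decision points plus one, which is a quick sanity check.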

16. Inspection in software testing

Inspection is a verification process that is more formal than a walkthrough. The inspection team has three to eight members, including a moderator, a reader, and a recorder. The target is usually a document, such as a requirements specification or a test plan, and the goal is to find flaws and gaps in it. The result is a written report.

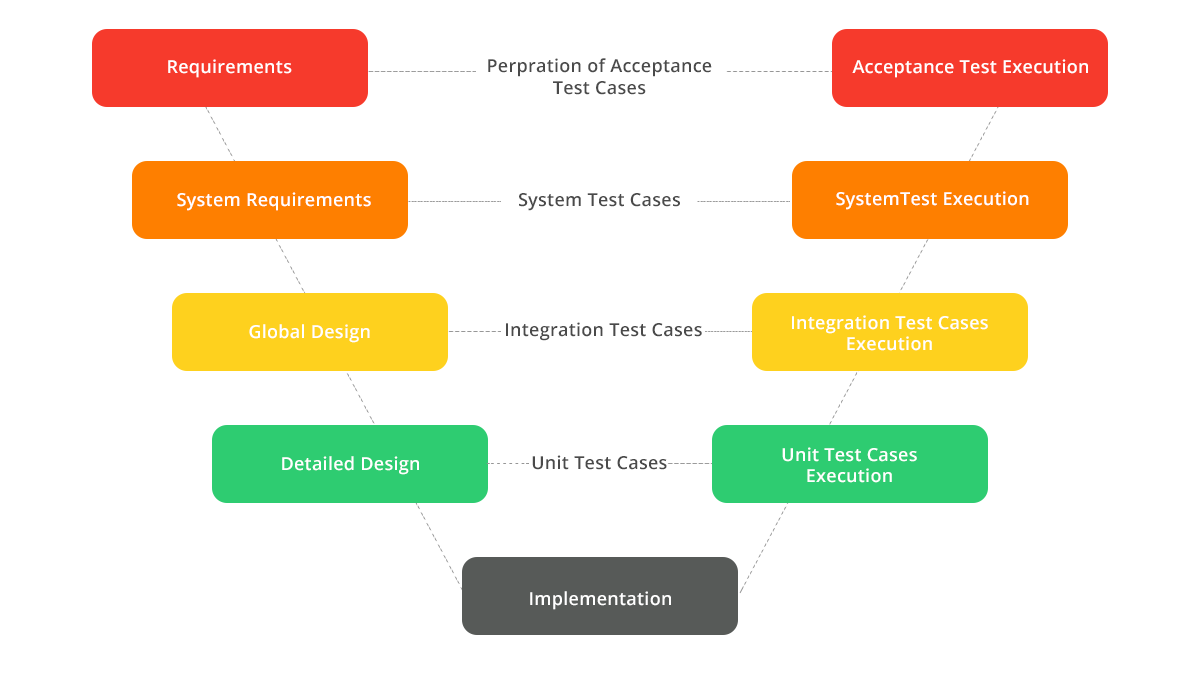

17. V model in manual testing

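The V model is an extension of the waterfall model of the software life cycle. Instead of continuing in a straight line, the downward development phases turn upward after the implementation (coding) stage, forming a V, so that every development phase has a corresponding testing phase. For example, requirements analysis is paired with acceptance testing, and module design with unit testing.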

Finally

While you cannot know exactly what the interviewer will ask, the 17 questions described above are among the most common ones. We hope they help!
