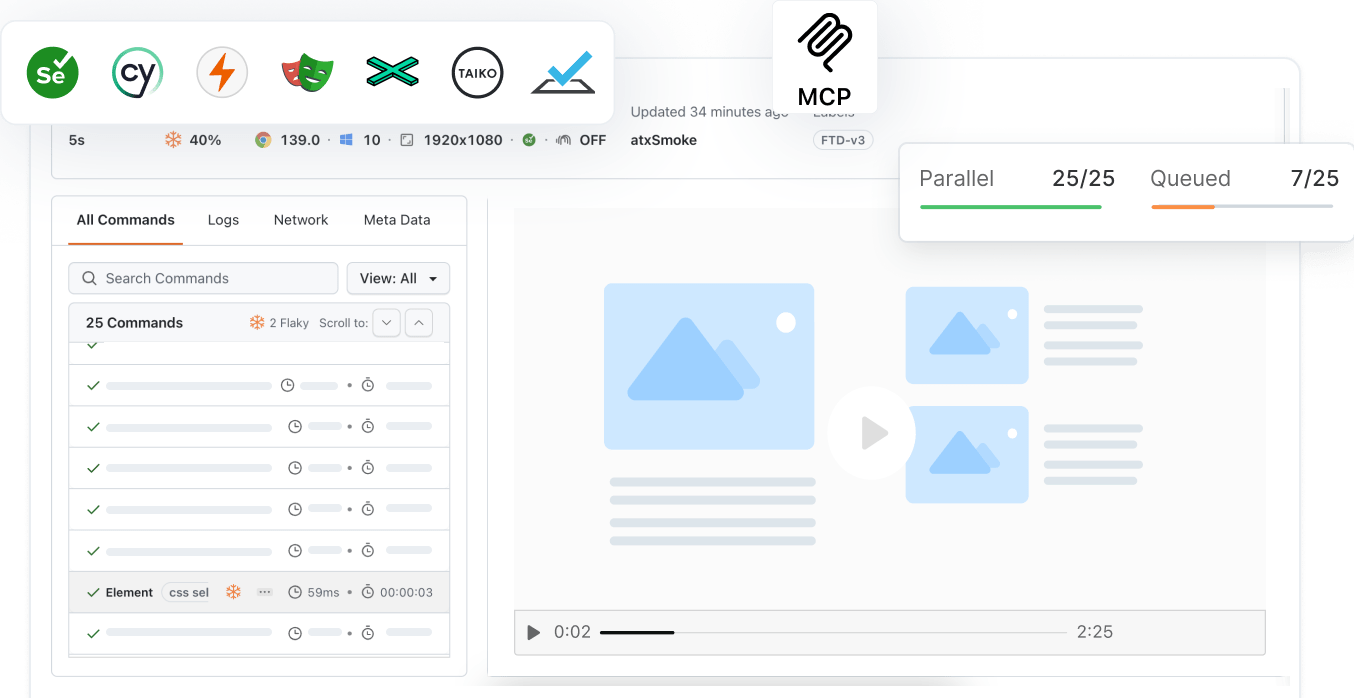

Scale Your Automation Testing with AI

Run end-to-end parallel tests & reduce test execution time by 5x

Generate tests scripts using natural language with KaneAI

Accelerate your testing process with Autoheal, SmartWait & RCA

- Testing Basics

- Home

- /

- Learning Hub

- /

- Top 100+ Data Structure Interview Questions [2024]

Top 100+ Data Structure Interview Questions [2024]

Learn 100+ data structure interview questions for 2024, covering arrays, linked lists, trees, heaps, and more, with detailed explanations and examples.

Last Modified on: November 25, 2025

- Share:

- Testing Framework Interview Questions

- Testing Types Interview Questions

- General Interview Questions

- CI/CD Tools Interview Questions

- Programming Languages Interview Questions

- Development Framework Interview Questions

- Automation Tool Interview Questions

OVERVIEW

Data structures are essential for organizing and manipulating data effectively, and mastering them is crucial for technical interviews and software development roles. Understanding data structures is key to problem-solving, algorithm design, and optimizing performance in coding.

This guide provides the top 100+ data structure interview questions and answers for 2024, designed to help you prepare thoroughly and confidently for your technical interviews. By familiarizing yourself with these questions, you will be better equipped to demonstrate your expertise and secure your desired role in the tech industry.

Download Data Structure Interview Questions

Note: We have compiled all Data Structure Interview Question for you in a template format. Feel free to comment on it. Check it out now!

Freshers-Level Data Structure Interview Questions

Here, you will learn some of the fundamental data structure interview questions that are commonly asked of freshers. These questions test your understanding of data structure concepts, core functionalities, and basic operations on various data structures.

1. What Is an Array?

An array is indeed a linear data structure where elements are stored in sequential order and are of the same data type. These elements are placed in contiguous memory locations, which allows efficient access to each element using an index.

2. How Do You Declare an Array?

To declare an array, you need to specify the array's name, the type of its elements, and, optionally, the number of elements it can hold.

Basic Syntax:

type-specifier declarator[constant-expression];- type-specifier: Specifies the data type of the array elements (e.g., int, float, char).

- declarator: Names the array.

- constant-expression: Specifies the number of elements in the array. It must be an integer value greater than zero.

Examples:

int numbers[10]; // Declares an array named 'numbers' that can hold 10 integers.3. Can an Array Be Resized at Runtime?

In certain programming languages, arrays can resize dynamically, whereas in others, like C, the array size is fixed.

4. How Would You Find the Smallest and Largest Element in an Array?

To find the smallest and largest elements in an array, the array is traversed once, and each element is compared with the current smallest and largest values. During the traversal, the smallest and largest values are updated whenever an element smaller or larger than the current one is encountered. This efficient method requires only a single pass through the array to identify the smallest and largest elements.

5. Difference Between Array and Object

Arrays and objects are essential in programming, each serving distinct purposes for data storage and manipulation. It is one of the most frequently asked data structure interview questions.

| Characteristic | Array | Object |

|---|---|---|

| Purpose | Stores multiple items of the same type. | Represents a single entity with properties and methods. |

| Structure | Ordered collection of elements. | Collection of key-value pairs (properties). |

| Access | Using numeric indices. | Using property names. |

| Size | Fixed length | Dynamic (properties can be added/removed). |

| Type of content | Homogeneous (same data type). | Heterogeneous (different data types). |

| Iteration | Easy to iterate with loops. | Requires specific methods (e.g., Object.keys()). |

| Built-in methods | Limited (e.g., length). | Many (inherited from Object prototype). |

| Usage | When dealing with lists of similar items. | When representing complex entities or structures. |

6. Explain Multi-dimensional Array

A multi-dimensional array is an array of arrays that enables the storage of homogeneous data in a tabular format. It extends the concept of a one-dimensional array into multiple dimensions. For instance, a two-dimensional array resembles a matrix with rows and columns, while a three-dimensional array can be visualized as a stack of matrices.

The size of these arrays is determined by multiplying the sizes of all dimensions, and they are stored in row-major order in memory. It's a common topic, and most asked questions during data structure interview questions.

7. Explain a Jagged Array

A jagged array, also known as a ragged array or an array of arrays, is a multidimensional array where the nested arrays can have varying lengths. Unlike regular multidimensional arrays, which have a fixed size for each dimension, jagged arrays provide flexibility in organizing and storing data.

In a jagged array, the main array contains references to other arrays, and these sub-arrays can differ in length, creating an irregular or "jagged" structure.

For example, consider a school system where each grade has a different number of classes, and each class has a different number of students. A jagged array can efficiently represent this structure:

[

[Class1A, Class1B, Class1C],

[Class2A, Class2B],

[Class3A, Class3B, Class3C, Class3D]

]In this case, the main array represents grades, and each nested array represents the classes within that grade. Jagged arrays are commonly implemented in languages like Java, C#, and Python. It's a common topic and the most asked question during data structure interview questions.

Note: Run tests across 3000+ real devices, browsers, and OS combinations. Try LambdaTest Now!

8. What Is a Tree?

One of the most frequently asked data structure interview questions is about trees. To better answer this, you can explain that a tree is a hierarchical data structure used to efficiently organize data. It consists of a root node (the main node), structural nodes, and child nodes, all connected by edges.

Each node can have zero or more child nodes, and the tree is often visualized like a natural tree, with the root as the base and child nodes acting as branches and leaves. Trees are widely used in scenarios requiring hierarchical organization, such as file systems, databases, and decision trees.

9. How Would You Reverse an Array In-Place in Linear Time and Constant Space?

One of the most frequently asked data structure interview questions is about reversing an array. To address this, you can explain that an effective way to reverse an array in place while maintaining linear time complexity and constant space usage is by using the two-pointer technique. This approach involves swapping elements: exchange the element at the start pointer with the element at the end pointer. After each swap, move the pointers towards each other and continue this process until the entire array is reversed.

The following C# implementation demonstrates this approach:

static void reverseArray(int[] arr, int start, int end)

{

int temp;

while (start < end) {

temp = arr[start];

arr[start] = arr[end];

arr[end] = temp;

start++;

end--;

}

}10. What Is the Difference Between an Array and a List?

Arrays and lists are fundamental data structures used for storing collections of elements, but they differ in their flexibility and usage, and it is the most common topic in data structure interview questions.

Here's a table comparing the key differences between arrays and lists:

| Characteristic | Array | List |

|---|---|---|

| Size | Fixed-size | Dynamic (can grow or shrink). |

| Memory allocation | Contiguous | It can be non-contiguous. |

| Element access | Constant time O(1). | Varies (often O(1) for ArrayList, O(n) for LinkedList). |

| Insertion/Deletion | Inefficient, especially in the middle. | Efficient, especially for LinkedList. |

| Type of elements | Homogeneous (same data type). | It can be heterogeneous (different data types). |

| Memory efficiency | More efficient | Less efficient due to overhead. |

| Multidimensional | Easily implemented. | Possible but more complex. |

| Implementation | The built-in data structure in most languages. | Often implemented as a class/abstract data type. |

| Use case | When size is known and fixed. | When frequent insertions/deletions or unknown size. |

11. Discuss the Advantages and Disadvantages of Using Arrays

Some of the advantages of arrays are mentioned below:

- Code Optimization: Efficient storage and retrieval with concise code.

- Functionality: Supports algorithms for searching, sorting, etc.

- Index-Based Access: Quick element access using an index.

- Multi-Dimensional: Manages complex data structures like matrices.

- Memory Allocation: Consecutive storage minimizes overhead.

- Foundational Use: Basis for other data structures like stacks and queues.

Some of the disadvantages of arrays are mentioned below:

- Size Limitation: Fixed size; cannot be changed once set.

- Difficulty in Expansion: Inefficient to resize; requires copying data.

- Memory Inefficiency: Wastes memory if the allocated size exceeds the actual need.

- Type Restriction: Stores only one type of data.

- Insertion/Deletion Challenges: Cumbersome to add/remove elements; requires shifting.

- Memory Consumption: Often allocated larger than needed.

- Lack of Index Checking: No automatic index bounds checking in some languages, leading to potential errors.

12. Explain Sparse Array

This is one of the most common topics in data structures and is frequently asked during data structure interview questions. A sparse array, or sparse matrix, is a data structure where most elements have a default value, such as zero or null.

Characteristics of Sparse Arrays:

- Most elements in a sparse array share a common value, usually zero or null.

- The non-zero or non-null elements are significantly fewer than the default-value elements.

- Element indices in sparse arrays may not be continuous or start from zero.

- Sparse arrays are efficient when dealing with data that has many default values, as they save memory and computational resources.

13. What Is the Time Complexity for Performing Basic Operations in an Array?

It is one of the most frequently asked topics in data structure interview questions because it relates to time complexity. It’s essential to understand the time complexity of operations when writing code for any feature to ensure efficient performance.

| Operation | Time Complexity |

|---|---|

| Access | O(1) |

| Insertion at end | O(1) |

| Insertion at beginning | O(n) |

| Insertion at a specific index | O(n) |

| Deletion at end | O(1) |

| Deletion at beginning | O(n) |

| Deletion at a specific index | O(n) |

| Search (unsorted array) | O(n) |

| Search (sorted array) | O(log n) |

| Sort (comparison-based) | O(n log n) |

14. Difference Between Array and ArrayList

While there isn’t a huge difference between an Array and an ArrayList, developers need to understand their subtle distinctions. These differences help determine when to use each data structure for optimal performance, and this question is frequently asked during data structure interview questions.

| Characteristic | Array | ArrayList |

|---|---|---|

| Type | Built-in language feature. | Class in java.util package. |

| Size | Fixed-size | Dynamic size. |

| Performance | Generally faster. | Slightly slower due to additional features. |

| Multidimensional | Easily implemented. | Requires nesting. |

| Methods | Limited (e.g., clone(), length). | Many utility methods (e.g., add(), remove(), clear()). |

| Syntax | int[] arr = new int[5]; | ArrayList<Integer> list = new ArrayList<>(); |

| Memory | Continuous memory allocation. | Non-continuous memory allocation. |

| Resizing | Not possible. | Automatic (doubles when full). |

| Interface | Does not implement any interface. | Implements List interface. |

| Generics | Not supported | Supported |

15. What Is a Linked List?

A linked list is a linear data structure comprising a sequence of nodes that are connected through pointers in languages like C or C++ or through references in languages such as Java, Python, and JavaScript.

Each node holds data and a pointer or reference to the subsequent node in the sequence. Unlike arrays, linked lists enable efficient insertion or removal of elements at any position within the list since the nodes are stored non-contiguously in memory.

16. What Are the Different Types of Linked Lists?

There are five types of LinkedList commonly referenced in data structure interview questions:

- Singly Linked List

- Doubly Linked List

- Circular Linked List

- Circular Doubly Linked List

- Header Linked List

17. Why Use Linked Lists?

Linked Lists are preferred for their efficient insertion and deletion operations. Unlike arrays, where inserting or deleting in the middle takes O(n) time, linked lists allow these operations in O(1) time by adjusting pointers. They are ideal for implementing queues, deques, stacks, and other data structures due to their simplicity. Additionally, linked lists are more space-efficient when the number of elements is unknown in advance, avoiding the memory reallocation required by dynamic arrays.

18. How Do You Remove a Particular Element From an Array?

Since arrays have fixed sizes, it’s not possible to directly remove elements from them. The best approach is to create a new array. To achieve this, you can copy the elements from the original array into the new one, omitting the element you wish to remove.

19. Are Linked Lists Considered Linear or Non-linear Data Structures?

Linked lists are generally considered linear data structures because their elements are arranged sequentially, and each element points to the next. They are not typically classified as non-linear, even in different applications, since traversal follows a linear path.

The distinction between linear and non-linear data structures is a crucial topic, and it's one of the most frequently asked questions during data structure interview questions.

20. Why Insertion/Deletion Are Faster in a Linked List?

Insertion and deletion are faster in a linked list because they only require adjusting the pointers (or references) of the surrounding nodes, whereas, in an array, elements must be shifted to maintain the sequential order. This makes linked lists more efficient for these operations, especially when compared to arrays where shifting elements can be time-consuming and this question is often asked during data structure interview questions.

21. How Do You Search for a Target Key in a Linked List?

To search for a target key in a linked list, you indeed perform a sequential (or linear) search. Starting from the head of the linked list, you check each node's value against the target key. If a match is found, the search stops; otherwise, it continues to the next node until the target is found or you reach the end of the list.

This is the typical search process for linked lists due to their linear structure.

The data structure interview questions covered above are fundamental and essential for any fresher to know, as they form the basic foundation of understanding data structures and their operations. Mastering these basics is crucial for building a strong data structures skill set and performing well in interviews.

As you progress, you will learn intermediate-level data structure interview questions to deepen your knowledge and enhance your expertise. This will help you tackle more complex topics and scenarios and advance your skills in the field.

Intermediate-Level Data Structure Interview Questions

These data structure interview questions cover advanced topics and are ideal for candidates with some experience in data structures. They are designed to test your ability to handle complex scenarios and optimize operations, helping you further enhance your skills.

22. Write a Program to Remove Duplicates From a Sorted Array in Place, Ensuring That the Relative Order of the Elements Is Maintained

Input: {2, 2, 3, 3, 3, 4, 4, 5, 5, 5, 6}

Output: {2, 3, 4, 5, 6}

Explanation:The array contains duplicates of 2, 3, 4, and 5. After removing duplicates, we're left with one instance of each unique element, maintaining their relative order.

public class RemoveDuplicates {

public static int removeDuplicates(int[] nums) {

if (nums == null || nums.length == 0) {

return 0;

}

int i = 0;

for (int j = 1; j < nums.length; j++) {

if (nums[j] != nums[i]) {

i++;

nums[i] = nums[j];

}

}

return i + 1;

}

public static void main(String[] args) {

int[] arr = {2, 2, 3, 3, 3, 4, 4, 5, 5, 5, 6};

int newLength = removeDuplicates(arr);

System.out.print("Output: {");

for (int i = 0; i < newLength; i++) {

System.out.print(arr[i]);

if (i < newLength - 1) {

System.out.print(", ");

}

}

System.out.println("}");

}

}Output:

Output: {2, 3, 4, 5, 6}23. Write a Function to Find the Number of Subarrays Whose Product Is Less Than a Given Integer K

Input: arr[] = [10, 5, 2, 6], K = 100

Output:8

Explanation: The subarrays satisfying the condition are [10], [5], [2], [6], [10, 5], [5, 2], [2, 6], [5, 2, 6]. The product of each of these subarrays is less than 100.

public class SubarrayProductCount {

public static int countSubarrays(int[] nums, int k) {

if (k <= 1) return 0;

int count = 0;

int product = 1;

int left = 0;

for (int right = 0; right < nums.length; right++) {

product *= nums[right];

while (product >= k) {

product /= nums[left];

left++;

}

count += right - left + 1;

}

return count;

}

public static void main(String[] args) {

int[] arr = {10, 5, 2, 6};

int k = 100;

int result = countSubarrays(arr, k);

System.out.println("Number of subarrays: " + result);

}

}Output:

Number of subarrays: 824. Find the Subsequence of Length 3 With the Highest Product From a Sequence of Non-negative Integers, With the Elements in Increasing Order

Input:arr[] = {2, 4, 1, 7, 3, 8, 5}

Output: 280

Explanation:Increasing subsequences of size three are

{2, 4, 7} => product 247 = 56

{2, 4, 8} => product 248 = 64

{2, 7, 8} => product 278 = 112

{4, 7, 8} => product 478 = 224

{3, 5, 8} => product 358 = 120

Maximum product: 224

import java.util.Arrays;

public class MaxProductSubsequence {

public static int findMaxProduct(int[] arr) {

int n = arr.length;

if (n < 3) {

return 0;

}

Arrays.sort(arr);

int maxProduct = 0;

int[] maxSubsequence = new int[3];

for (int i = 0; i < n - 2; i++) {

for (int j = i + 1; j < n - 1; j++) {

for (int k = j + 1; k < n; k++) {

int product = arr[i] * arr[j] * arr[k];

if (product > maxProduct) {

maxProduct = product;

maxSubsequence[0] = arr[i];

maxSubsequence[1] = arr[j];

maxSubsequence[2] = arr[k];

}

}

}

}

System.out.printf("Subsequence with max product: {%d, %d, %d}

",

maxSubsequence[0], maxSubsequence[1], maxSubsequence[2]);

return maxProduct;

}

public static void main(String[] args) {

int[] arr = {2, 4, 1, 7, 3, 8, 5};

int result = findMaxProduct(arr);

System.out.println("Maximum product of increasing subsequence of length 3: " + result);

}

}Output:

Subsequence with max product: {5, 7, 8}

Maximum product of increasing subsequence of length 3: 28025. What Are the Disadvantages of Using Linked Lists?

Linked lists are a commonly used data structure in computer science and are frequently discussed in data structure interview questions, but like any other, they come with certain drawbacks.

Some key disadvantages include:

- Slow Access Time: Accessing elements in a linked list can be time-consuming, as you must traverse the list to find the desired element, an O(n) operation. This makes linked lists less suitable for scenarios requiring quick access to elements.

- Pointers or References: Linked lists rely on pointers or references to connect nodes, which can add complexity compared to arrays. This complexity can make linked lists harder to understand, debug, and maintain.

- Higher Overhead: Linked lists involve higher overhead compared to arrays since each node requires additional memory to store a reference to the next node.

- Cache Inefficiency: Linked lists are inefficient in terms of cache usage because the memory is non-contiguous. As you traverse a linked list, cache misses are more likely, resulting in slower performance.

26. What Is the Time Complexity of Linked List Operations?

The time complexity of linked list operations varies: accessing an element or searching for a value takes O(n) time, while inserting or deleting elements at the beginning or end takes O(1) time. This is a common Data Structures interview question asked to test your understanding of the performance characteristics of linked lists.

| Operation | Time Complexity |

|---|---|

| Insertion at Beginning | O(1) |

| Insertion at End | O(n) |

| Deletion at beginning | O(1) |

| Deletion at End | O(n) |

| Searching in the Linked list | O(n) |

27. How Would You Compare Dynamic Arrays vs Linked Lists?

One of the most commonly asked data structure interview questions involves comparing different data structures. Let’s explore the key differences between these two data structures:

| Aspect | Dynamic Arrays | Linked Lists |

|---|---|---|

| Memory allocation | Contiguous | Non-contiguous |

| Size | Flexible, can grow or shrink. | Flexible and grows with each element. |

| Access time | O(1) - Constant time | O(n) - Linear time |

| Insertion/Deletion at beginning | O(n) - Linear time | O(1) - Constant time |

| Insertion/Deletion at the end | O(1) amortized | O(n) for singly linked, O(1) for doubly linked with tail pointer. |

| Memory overhead | Low | Higher (due to storing pointers). |

| Cache performance | Better (contiguous memory). | Poorer (scattered memory). |

| Implementation complexity | Simpler | More complex. |

| Suitable for | Random access, fixed size operations. | Frequent insertions/deletions, unknown size. |

28. Write a Function to Sort a Linked List That Contains Only 0s, 1s, and 2s

Input: 2 -> 0 -> 1 -> 2 -> 1 -> 0 -> NULL

Output:0 -> 0 -> 1 -> 1 -> 2 -> 2 -> NULL

Explanation:The function should rearrange the nodes so that all 0s come first, followed by all 1s, and then all 2s, while maintaining the stability of the original order within each group.

class ListNode {

int val;

ListNode next;

ListNode(int val) {

this.val = val;

this.next = null;

}

}

public class Sort012LinkedList {

public static ListNode sortList(ListNode head) {

if (head == null || head.next == null) {

return head;

}

ListNode zeroHead = new ListNode(0);

ListNode oneHead = new ListNode(0);

ListNode twoHead = new ListNode(0);

ListNode zero = zeroHead, one = oneHead, two = twoHead;

ListNode current = head;

while (current != null) {

if (current.val == 0) {

zero.next = current;

zero = zero.next;

} else if (current.val == 1) {

one.next = current;

one = one.next;

} else {

two.next = current;

two = two.next;

}

current = current.next;

}

zero.next = (oneHead.next != null) ? oneHead.next : twoHead.next;

one.next = twoHead.next;

two.next = null;

return zeroHead.next;

}

public static void printList(ListNode head) {

ListNode current = head;

while (current != null) {

System.out.print(current.val + " -> ");

current = current.next;

}

System.out.println("NULL");

}

public static void main(String[] args) {

ListNode head = new ListNode(2);

head.next = new ListNode(0);

head.next.next = new ListNode(1);

head.next.next.next = new ListNode(2);

head.next.next.next.next = new ListNode(1);

head.next.next.next.next.next = new ListNode(0);

System.out.println("Original list:");

printList(head);

head = sortList(head);

System.out.println("Sorted list:");

printList(head);

}

}Output:

Original list:

2 -> 0 -> 1 -> 2 -> 1 -> 0 -> NULL

Sorted list:

0 -> 0 -> 1 -> 1 -> 2 -> 2 -> NULL29. Implement the Quicksort Algorithm for Sorting a Doubly Linked List

Input: 7 <-> 3 <-> 9 <-> 1 <-> 5 <-> 8 <-> 2 <-> 6 <-> 4

Output: 1 <-> 2 <-> 3 <-> 4 <-> 5 <-> 6 <-> 7 <-> 8 <-> 9

Explanation:The function should sort the doubly linked list in ascending order using the Quicksort algorithm. Quicksort is chosen for its average-case time complexity of O(n log n) and its ability to sort in place.

class Node {

int data;

Node prev, next;

public Node(int data) {

this.data = data;

this.prev = this.next = null;

}

}

public class QuicksortDoublyLinkedList {

Node head, tail;

public void addNode(int data) {

Node newNode = new Node(data);

if (head == null) {

head = tail = newNode;

} else {

tail.next = newNode;

newNode.prev = tail;

tail = newNode;

}

}

public void printList() {

Node current = head;

while (current != null) {

System.out.print(current.data + " <-> ");

current = current.next;

}

System.out.println("NULL");

}

Node partition(Node low, Node high) {

int pivot = high.data;

Node i = low.prev;

for (Node j = low; j != high; j = j.next) {

if (j.data <= pivot) {

i = (i == null) ? low : i.next;

int temp = i.data;

i.data = j.data;

j.data = temp;

}

}

i = (i == null) ? low : i.next;

int temp = i.data;

i.data = high.data;

high.data = temp;

return i;

}

void quickSort(Node low, Node high) {

if (high != null && low != high && low != high.next) {

Node pivot = partition(low, high);

quickSort(low, pivot.prev);

quickSort(pivot.next, high);

}

}

public void sort() {

Node last = head;

while (last.next != null) {

last = last.next;

}

quickSort(head, last);

}

public static void main(String[] args) {

QuicksortDoublyLinkedList list = new QuicksortDoublyLinkedList();

list.addNode(7);

list.addNode(3);

list.addNode(9);

list.addNode(1);

list.addNode(5);

list.addNode(8);

list.addNode(2);

list.addNode(6);

list.addNode(4);

System.out.println("Original list:");

list.printList();

list.sort();

System.out.println("Sorted list:");

list.printList();

}

}Output:

Original list:

7 <-> 3 <-> 9 <-> 1 <-> 5 <-> 8 <-> 2 <-> 6 <-> 4 <-> NULL

Sorted list:

1 <-> 2 <-> 3 <-> 4 <-> 5 <-> 6 <-> 7 <-> 8 <-> 9 <-> NULL30. What Is a Stack?

A stack is a linear data structure where operations follow a specific order. This order can be LIFO (Last In, First Out) or FILO (First In, Last Out). LIFO means the most recently added element is removed first, while FILO means the first added element is removed last.

31. What Are the Operations Performed on a Stack?

To manipulate a stack, several operations are performed, which are often discussed in data structure interview questions:

- push(): Inserts an element into the stack.

- pop(): Removes an element from the stack.

- top(): Returns the top element of the stack.

- isEmpty(): Checks if the stack is empty and returns true if it is; otherwise, it is false.

- size(): Returns the current size of the stack.

32. How Is a Stack Implemented in an Array?

To implement stack in an array:

- Initialize an array as the stack.

- With the last element representing the top.

- Implement push to append to the end, pop to remove from the end, and peek to check the end, making sure to handle cases where the stack is empty or full.

33. What Is the Time Complexity of Stack Operations?

The time complexity for basic stack operations is O(1) for push, pop, and peek operations, as they involve constant time adjustments to the top of the stack. This efficiency is a key aspect often highlighted in data structure interview questions.

| Operation | Time Complexity | Description |

|---|---|---|

| Push | O(1) | Inserting an element at the top of the stack. |

| Pop | O(1) | Removing the top element from the stack. |

| Peek/Top | O(1) | Viewing the top element without removing it. |

| isEmpty | O(1) | Checking if the stack is empty. |

| Size | O(1) | Getting the current number of elements in the stack. |

| Search | O(n) | Finding a specific element in the stack. |

34. What Are the Applications of a Stack?

Stacks are crucial in managing function calls and recursion, where they maintain return addresses and local variables. They are also used for expression evaluation in postfix notation and syntax parsing in programming languages.

- Function Calls: They help maintain return addresses, ensuring the program returns to the correct point after a function call.

- Recursion: They manage local variables and return addresses for recursive function calls.

- Expression Evaluation: They are used to evaluate postfix expressions (Reverse Polish Notation).

- Syntax Parsing: They help in checking syntax correctness in programming languages and formal languages.

- Memory Management: They assist in memory allocation and management within some operating systems and programming environments.

35. What Is a Stack Overflow?

In computer programming, a stack overflow occurs when a program exceeds the stack's memory limit, a specialized area for managing function execution, local variables, parameters, and return addresses. Stacks follow a Last-In-First-Out (LIFO) principle, efficiently managing memory for function calls. However, if the stack is overused—such as by excessive local variable declaration or unbounded recursion—it can lead to a critical error known as a stack overflow.

However, the stack has a finite size, and exceeding this limit leads to a stack overflow. This can happen in two primary scenarios:

- Excessive local variable declaration: When a function creates an unusually large number of local variables or declares sizeable arrays or matrices, it may consume more stack space than available.

- Unbounded recursion: If a function calls itself repeatedly without a proper termination condition, each recursive call adds a new layer to the stack. Eventually, this can exhaust the available stack space.

36. Why Stack Is Considered a Recursive Data Structure?

The stack is a recursive data structure due to its self-referential definition. At any moment, a stack can be described as a top element and another stack (the remainder). The remaining portion forms a stack when an element is pushed or popped.

This self-referential property, where each operation breaks the problem into smaller instances of the same type, captures the essence of recursion.

37. What Is a Postfix Expression?

The standard method for writing mathematical expressions involves placing operators between operands, such as in "a + b," termed Infix expression. When the operator comes after the operands, for example, "a b +," this format is called Postfix expression or Reverse Polish notation.

38. What Is a Prefix Expression?

Prefix expressions, also called Polish notation, are a type of mathematical notation where the operator comes before its operands. In prefix notation, the operator precedes its operands. For example, the infix expression "a + b" is written as "+ a b" in prefix notation.

39. What Is the Difference Between a Stack and an Array?

Comparing a stack and an array is a common topic in data structure interview questions. The primary differences between a Stack and an Array include the method of data access, the types of data they support, the range of data access, the operations they permit, and the scenarios in which they are used.

Below are the differences between Stack and Array :

| Characteristic | Stack | Array |

|---|---|---|

| Data structure type | Abstract Data Type (ADT). | Concrete data structure. |

| Access pattern | LIFO (Last In First Out). | Random access. |

| Size | Dynamic | Fixed (in most languages). |

| Main operations | push(), pop(), peek() | Direct index access (arr[i]). |

| Memory allocation | Can be dynamic | Usually contiguous block. |

| Time complexity | O(1) for push/pop operations. | O(1) for index access. |

| Memory efficiency | It can be more efficient for dynamic size. | More efficient for fixed-size. |

| Use cases | Function call stack, undo operations. | Storing collections of similar items. |

40. Write a Code to Implement a Stack

There are two ways to implement a stack –

- Stack Implementation Using an Array

- Using Linked List

Stack Implementation Using an Array (Java)

public class StackArray {

private int maxSize;

private int top;

private int[] stack;

public StackArray(int size) {

maxSize = size;

stack = new int[maxSize];

top = -1;

}

public void push(int item) {

if (top < maxSize - 1) {

stack[++top] = item;

} else {

throw new StackOverflowError("Stack is full");

}

}

public int pop() {

if (isEmpty()) {

throw new IllegalStateException("Stack is empty");

}

return stack[top--];

}

public int peek() {

if (isEmpty()) {

throw new IllegalStateException("Stack is empty");

}

return stack[top];

}

public boolean isEmpty() {

return top == -1;

}

public int size() {

return top + 1;

}

public static void main(String[] args) {

StackArray stack = new StackArray(5);

stack.push(10);

stack.push(20);

System.out.println(stack.peek()); // Output: 20

System.out.println(stack.pop()); // Output: 20

System.out.println(stack.size()); // Output: 1

System.out.println(stack.isEmpty()); // Output: false

}

}

Stack Implementation Using a Linked List (Java)

public class StackLinkedList {

private Node top;

private int size;

private class Node {

int value;

Node next;

Node(int value) {

this.value = value;

}

}

public void push(int item) {

Node newNode = new Node(item);

newNode.next = top;

top = newNode;

size++;

}

public int pop() {

if (isEmpty()) {

throw new IllegalStateException("Stack is empty");

}

int value = top.value;

top = top.next;

size--;

return value;

}

public int peek() {

if (isEmpty()) {

throw new IllegalStateException("Stack is empty");

}

return top.value;

}

public boolean isEmpty() {

return top == null;

}

public int size() {

return size;

}

public static void main(String[] args) {

StackLinkedList stack = new StackLinkedList();

stack.push(10);

stack.push(20);

System.out.println(stack.peek()); // Output: 20

System.out.println(stack.pop()); // Output: 20

System.out.println(stack.size()); // Output: 1

System.out.println(stack.isEmpty()); // Output: false

}

}

Download Data Structure Interview Questions

Note: We have compiled all Data Structure Interview Question for you in a template format. Feel free to comment on it. Check it out now!

41. What Is a Queue?

A queue is a linear data structure with two open ends, operating on a First In, First Out (FIFO) principle. A queue is defined as a list where additions occur at one end (the back) while deletions are performed at the opposite end (the front). The first element added is also the first one to be removed.

42. What Is a Double-Ended Queue (Deque)?

Double Ended Queue is a linear data structure that supports insertion and deletion operations from both ends.

43. What Operations Can Be Performed on Queues?

The following operations are performed on queues :

- Enqueue: Adds an element to the rear of the queue.

- Dequeue: Removes and returns the front element.

- Peek / Front: Returns the front element without removing it.

- IsEmpty: Checks if the queue is empty.

- IsFull: Checks if the queue is full (for bounded queues).

44. What Are the Different Types of Queues?

There are three primary types of queues:

- Simple Queue or Linear Queue:

- Circular Queue:

- Priority Queue:

A linear queue operates on a First-In-First-Out (FIFO) basis, where elements are inserted at the rear end and removed from the front end. This queue type follows a strict order for insertion and deletion.

A circular queue improves upon the linear queue by connecting the end of the queue back to the beginning, forming a ring structure. This arrangement allows the queue to utilize space more efficiently.

In a priority queue, elements are arranged based on their priority levels. Each element has an associated priority, and those with the same priority are managed according to the FIFO principle. Priority queues are further classified into two types: ascending priority queues and descending priority queues.

45. What Is the Time Complexity of Enqueue and Dequeue Operations in a Queue?

In a queue, the time complexity of both enqueue and dequeue operations is O(1), making them highly efficient. This is a common data structure interview question asked to test your understanding of basic queue operations.

| Operation | Time Complexity |

|---|---|

| Enqueue | O(1) |

| Dequeue | O(1) |

46. What Is the Difference Between a Queue and a Stack?

A queue follows a First-In-First-Out (FIFO) principle, where elements are added at the rear and removed from the front, while a stack operates on a Last-In-First-Out (LIFO) principle, where elements are added and removed from the top.

This distinction is fundamental in data structure, and it's the common question asked in data structure interview questions to test your understanding.

| Characteristic | Queue | Stack |

|---|---|---|

| Principle | FIFO (First In, First Out) | LIFO (Last In, First Out) |

| Access point | Two ends (front and rear). | One end (top). |

| Implementation | Can use an array or linked list. | Can use an array or linked list. |

| Main operations | enqueue(), dequeue() | push(), pop() |

| Flexibility | Both ends are accessible. | Only one end is accessible. |

| Time complexity | O(1) for enqueue and dequeue. | O(1) for push and pop. |

| Visualization | Horizontal (like a line). | Vertical (like a stack of plates). |

| Usage examples | Breadth-first search, print queue. | Depth-first search, undo functionality. |

47. What Are the Advantages and Disadvantages of Using a Queue?

Advantages of using a Queue:

- Ensures fair processing of elements in the order they arrive.

- Easy to understand and implement.

- O(1) time complexity for basic operations (enqueue, dequeue).

- It can be used to manage memory in systems efficiently.

- Helps in decoupling components in producer-consumer scenarios.

- Useful in managing data flow between processes.

Disadvantages of using a Queue:

- Only the front element is directly accessible.

- Cannot easily access or manipulate elements in the middle.

- Fixed-size queues can lead to overflow issues.

- It may waste memory in fixed-size implementations.

- In single-threaded environments, this can lead to blocking on full/empty queues.

- Basic queues don't support priority-based processing.

- In circular queues, new elements may overwrite old ones if not managed properly.

48. What Is a Heap Data Structure and What Are the Two Types of Heaps?

Heap is a crucial topic in data structures and often appears in data structure interview questions. A heap is a complete binary tree that satisfies the heap property, where each node’s value is less than or equal to the values of its children. This structure is especially important when discussing priority queues, as heaps ensure that the smallest (or largest) element is always at the root.

There are two primary types of heaps:

- Min Heap: A Min heap is a particular type of binary heap in which the value of each node is always less than or equal to the values of its children. Min heaps are advantageous when the objective is to efficiently locate and extract the minimum element from a dataset.

- Max Heap: A Max heap, on the other hand, is a type of binary heap in which each node's value is greater than or equal to the values of its children. Max heaps are particularly useful for quickly locating and removing the maximum element from a collection.

49. What Is the Time Complexity of Inserting and Deleting an Element From a Heap?

In a heap, the time complexity for inserting and deleting an element is O(log n). This is because both operations require maintaining the heap property, which involves traversing the height of the tree; it is a key aspect of the data structure topic and is often asked during the data structure interview questions.

| Operation | Time Complexity | Description |

|---|---|---|

| Insertion | O(log n) | Adding a new element to the heap. |

| Deletion | O(log n) | Removing an element from the heap (typically the root). |

| Find Min/Max | O(1) | Finding the minimum (in a min-heap) or maximum (in a max-heap) element. |

| Build Heap | O(n) | Creating a heap from an unsorted array. |

50. What Is a Binary Heap?

A Binary Heap is a complete binary tree that maintains the heap property. In a max-heap, each parent node's value is greater than or equal to the values of its children, while in a min-heap, each parent node's value is less than or equal to the values of its children. This structure allows efficient retrieval of the maximum element in a max-heap or the minimum element in a min-heap.

51. What Is the Difference Between a Heap and a Binary Search Tree (BST)?

Since you have already reviewed heaps and binary search trees (BSTs), it's important to note that the main difference between them is that a BST does not allow duplicate elements, while a heap can contain duplicate values.

| Characteristic | Heap | Binary Search Tree (BST) |

|---|---|---|

| Purpose | Efficiently maintain min/max elements. | Efficient search, insertion, and deletion. |

| Ordering | Partial ordering (heap property). | Total ordering (left < root < right). |

| Balance | Always balanced | May become unbalanced |

| Root element | Min (min-heap) or max (max heap). | The middle value of sorted elements. |

| Duplicate values | Allowed | Depends on implementation (often not allowed). |

| Implementation | Often array-based. | Often node-based. |

| Space efficiency | More space-efficient. | Less space-efficient due to pointers. |

| Maximum element | O(1) in max heap, O(n) in min heap. | O(log n) (rightmost node). |

| Use cases | Priority queues, heapsort. | Dictionaries, symbol tables. |

52. How Do You Convert a BST Into a Heap?

To convert a Binary Search Tree (BST) into a Heap, follow these steps:

- Traverse the BST: Perform an in-order traversal of the BST to retrieve elements in sorted order.

- Build the Heap: Insert each element from the traversal into a new Heap. The Heap will automatically adjust itself to maintain the Heap property with each insertion.

This process ensures that the elements are restructured into a Heap while preserving the ordering from the BST.

53. How Do You Merge Two Heaps?

To merge two heaps, you can follow:

- Combine Elements: Merge all elements from both heaps into a single array. This is done by appending the elements of the second heap to the first heap.

- Heapify: Apply the heapify algorithm to the combined array. This process involves rearranging the elements to satisfy the heap property (either min-heap or max-heap) and can be efficiently done in linear time.

54. What Is the Difference Between a Heap and a Priority Queue?

The difference between a heap and a priority queue lies in their implementation and usage. A heap is a specific tree-based data structure used to implement a priority queue, which abstracts the heap operations to offer efficient priority-based retrieval. This distinction is often asked in data structure interview questions to test your understanding of these fundamental concepts.

Below is the table explaining the key differences between a heap and a priority queue:

| Characteristic | Heap | Priority Queue |

|---|---|---|

| Implementation | Concrete implementation. | It can be implemented using various data structures. |

| Structure | Tree-based structure. | No specific structure (depends on implementation). |

| Main operations | Insert, delete max/min. | Enqueue, dequeue, the highest priority. |

| Access to elements | Only root (max/min) is directly accessible. | Highest priority element accessible. |

| Types | Min heap, max heap. | It can have various priority schemes. |

| Memory usage | Generally more memory-efficient. | Depends on implementation. |

| Use case | Implementing priority queues, heapsort | Task scheduling, Dijkstra's algorithm |

55. What Is a Hash Data Structure?

A Hash data structure is a data structure that stores information consisting of two primary components: a key and a value. It can be implemented using an associative array, with the mapping efficiency depending on the hash function's effectiveness.

56. What Is a Hash Table?

A Hash table is a data structure designed for the quick insertion, lookup, and removal of key-value pairs. It works on the principle of hashing, where a hash function translates each key into a unique index in an array, which then serves as the storage location for the corresponding value. Simply put, it maps keys to values.

57. What Is a Hash Function?

This is often asked in data structure interview questions. A function that converts keys into array indices is called a hash function. An effective hash function evenly distributes the keys across the array, minimizing collisions and ensuring fast lookup times.

- Integer Universe Assumption: Assumes keys are integers within a defined range, simplifying hashing techniques.

- Division Hashing: Uses the remainder of the key divided by the array's size to determine the index. It is effective when the array size is prime, and the keys are well-distributed.

- Multiplication Hashing: Involves multiplying the key by a constant and using the fractional part to find the index. This method is also effective with well-distributed keys.

58. What Is the Difference Between Hashing and Hash Tables?

Hashing converts data into a fixed-size value (hash value), which helps in efficient data handling. A hash table is a data structure that uses hashing to store and quickly retrieve data based on these hash values. This distinction is often asked in data structure interview questions to test your understanding of these data structure concepts.

| Aspect | Hashing | Hash Tables |

|---|---|---|

| Definition | A technique for converting a key into an index for storage. | A data structure that uses hashing to store key-value pairs. |

| Purpose | To generate an index to access data quickly. | To organize data for efficient retrieval, insertion, and deletion. |

| Focus | The algorithm or method used to map keys to indices. | The overall data structure implementing the hashing technique. |

| Function | Uses functions like division or multiplication to create indices. | Uses hash functions to manage storage and retrieval of key-value pairs. |

| Example | Hash functions like division hashing or multiplication hashing. | A hash table implements a hash function to manage a set of key-value pairs. |

| Data Structure | It's not a data structure itself; it's a concept used within data structures. | A concrete data structure that uses hashing to function effectively. |

| Efficiency | Depends on how well the hash function distributes keys. | Efficiency depends on the hash function and handling of collisions. |

59. What Is a Collision?

A collision in a hash data structure occurs when two or more distinct keys hash to the same index in the hash table.

60. What Are the Different Techniques for Collision Resolution?

The following are the two techniques for collision resolution:

- Separate Chaining:In this approach, a linked list is formed for each slot where a collision takes place, and the new key is added to this list. The technique is known as separate chaining because it uses chains of linked lists to handle collisions. This method is especially useful when the number of keys to be managed is not fixed.

- Open Addressing: Open addressing is used to handle collisions in a hash table by resolving them within the table itself. This technique ensures that all keys are stored within the hash table and that the table’s size is always greater than the number of keys. It is also referred to as closed hashing.

61. What Is the Complexity of Hash Table?

The time complexity of hash table operations such as insertion, deletion, and lookup is typically O(1) on average due to efficient hash function performance. However, in cases of high collision rates, the complexity can degrade to O(n). This topic in data structure is a key aspect, and hence, it's the most frequently asked question in the data structure interview questions.

| Operation | Average Case | Worst Case |

|---|---|---|

| Insert | O(1) | O(n) |

| Delete | O(1) | O(n) |

| Search | O(1) | O(n) |

| Space Complexity | O(n) | O(n) |

62. Explain in Simple Terms How Hash Tables Are Implemented

Hash tables are implemented using an array of "buckets" where data is stored. When you input data, a hash function converts the key associated with that data into an index, which determines where in the array the data will go. If two keys produce the same index (a collision), the hash table will store multiple items in the same bucket, usually by chaining them together in a list.

This way, when you want to retrieve data, you use the same hash function to quickly find the correct bucket and then search within it if needed.

63. What Is MD5?

MD5, which stands for Message Digest algorithm 5, is a widely known cryptographic hash function. Developed by Ronald Rivest in 1991, MD5 was designed to enhance its predecessor, MD4, and provide improved security features.

The primary function of MD5 is to take an input message of any length and produce a fixed-size output, known as a hash or digest. This output is always 128 bits long, equivalent to 16 bytes. The fixed-length output is a crucial characteristic of hash functions, allowing for consistent processing and storage of hash values regardless of the input size.

64. How to Choose Between a Hash Table and a Trie (Prefix Tree)?

Choosing between a hash table and a trie depends on your use case: hash tables are ideal for fast key-value lookups, while tries are better for prefix matching and ordered data. This is a common topic in data structure interview questions, highlighting their distinct advantages for different scenarios.

Nature of the Data:

- Hash Table: Best suited for arbitrary key-value pairs.

- Trie: Ideal for string keys or sequential data, such as prefix searches.

Operations Needed:

- Hash Table: Excels in single key lookups, insertions, and deletions.

- Trie: Superior for prefix matching, autocomplete, and lexicographical ordering.

Memory Usage:

- Hash Table: Generally more memory-efficient for diverse or sparse key sets.

- Trie: More efficient for sets of keys with common prefixes, though it may use more memory overall.

Time Complexity:

- Hash Table: Average case O(1) for basic operations.

- Trie: O(k), where k is the length of the key, can be advantageous for string-related operations.

Implementation Complexity:

- Hash Table: Simpler to implement and understand.

- Trie: More complex to implement, particularly with advanced optimizations.

65. What Is Chaining?

Chaining is a collision resolution technique used in hash tables where each bucket contains a linked list (or another data structure). When multiple keys hash to the same index, they are stored in this list, allowing multiple entries to be handled at the same index.

66. What Is Separate Chaining?

Separate chaining is indeed a technique used for collision resolution in hash tables. It involves maintaining a linked list (or another data structure) for each slot in the hash table array. When multiple elements hash to the same index, they are appended to the list associated with that slot.

This approach allows the hash table to handle collisions by storing multiple entries that share the same hash value in a chain. The search operation involves traversing the linked list to find the element with the desired key.

67. What Is Cuckoo Hashing and Its Key Aspects?

Cuckoo hashing is a collision resolution technique that uses two (or more) hash functions and two (or more) hash tables. When a key collides in one hash table, it displaces an existing key, which is then rehashed using the second hash function and moved to the second hash table. This process ensures that each key has a fixed number of potential locations and helps maintain efficient lookups and insertions by keeping the hash tables relatively balanced.

The key aspects of cuckoo hashing include:

- Two Hash Functions: It uses multiple hash functions to distribute keys across different hash tables.

- Displacement Mechanism: When a key collides, it displaces an existing key, which is then rehashed to another table.

- Guaranteed Lookup Time: Cuckoo hashing ensures O(1) average-time complexity for lookups.

This method helps maintain a low load factor and ensures that the average time complexity for operations remains efficient.

68. What Is the Load Factor of a Hash Table?

The load factor of a hash table is indeed defined as the ratio of the number of keys (or entries) to the total number of slots (or buckets) in the hash table. It is calculated as:

Load Factor= Number of Slots/ Number of Slots

A higher load factor indicates that the hash table is more full, which can increase the likelihood of collisions, while a lower load factor indicates that the hash table has more empty slots, potentially reducing collisions but increasing memory usage.

The intermediate-level data structure interview questions listed above are designed to help both beginners and those with some experience prepare effectively for interviews. As you progress further, you will encounter more challenging data structure interview questions that are particularly relevant for experienced professionals.

Advanced-Level Data Structure Interview Questions

Here, the focus shifts to advanced topics essential for experienced data structure professionals. By exploring these advanced data structure interview questions, you will gain a comprehensive understanding of complex data structures and algorithms, equipping you to handle intricate data management and optimization scenarios effectively.

69. What Is an ArrayIndexOutOfBounds Exception?

The ArrayIndexOutOfBoundsException in Java occurs when an attempt is made to access an index of an array that is outside the valid range (0 to n-1, where n is the array's length). This exception ensures that bounds checking is performed automatically, unlike in languages like C or C++, which do not perform automatic bounds checking and may lead to undefined behavior if an out-of-bounds index is accessed.

Let’s understand this with a simple example:

public class ArrayIndexOutOfBoundsExample {

public static void main(String[] args) {

int[] numbers = {1, 2, 3, 4, 5};

System.out.println("Array length: " + numbers.length);

// Accessing valid index

System.out.println("Element at index 2: " + numbers[2]);

// This will throw an ArrayIndexOutOfBoundsException

System.out.println("Element at index 5: " + numbers[5]);

// The following line won't be executed due to the exception

}

}Expected Output:

Array length: 5

Element at index 2: 3

Original Output:

Array length: 5

Element at index 2: 3

ERROR!

Exception in thread "main" java.lang.ArrayIndexOutOfBoundsException: Index 5 out of bounds for length 5

at ArrayIndexOutOfBoundsExample.main(ArrayIndexOutOfBoundsExample.java:11)70. Explain Different Types of Trees

This is the most crucial topic in data structure and most often appears in Data structure interview questions. A tree structure where each node has up to two children, with variations such as full, complete, balanced, and degenerate trees. At the same time, binary trees where each node's left children contain smaller values, and the right children contain larger values, and likewise, there are other types of trees as well that are highlighted below:

- Binary Tree: A binary tree is a data structure where each node has at most two children, known as the left and right child. There are several subtypes:

- Full Binary Tree: Every node has either 0 or 2 children.

- Complete Binary Tree: All levels are filled except possibly the last, which is filled from left to right.

- Balanced Binary Tree: The height difference between the left and right subtrees is minimal.

- Degenerate (Pathological) Binary Tree: Each parent node has only one child, resulting in a structure similar to a linked list.

- Binary Search Tree (BST): A binary search tree (BST) is a binary tree where the value of each node in the left subtree is less than the value of the root, and the value of each node in the right subtree is greater. While all BSTs are binary trees, not all binary trees are BSTs due to this specific ordering property.

- AVL Tree: An AVL tree is a self-balancing binary search tree. It maintains balance by ensuring that the height difference between the left and right subtrees of any node is no more than one.

- B-Tree: A B-tree is a self-balancing search tree where each node can contain multiple keys and have multiple children. This structure reduces the tree height and improves disk access performance by allowing more keys to be stored per node and having more than two children per node.

71. What Are the Basic Operations Performed on a Tree?

The basic operations performed on a tree are:

- Insertion: Add a new node to the tree while maintaining its structural and ordering rules.

- Deletion: Remove a node from the tree while ensuring the tree’s overall structure and properties remain intact.

- Traversal: Visit each node in the tree exactly once, following a specific order such as preorder, inorder, or postorder.

- Searching: Locate a specific node in the tree based on given criteria and its value.

72. What Is a Leaf Node?

A leaf node is a node in a tree data structure that does not have any child nodes.

73. What Is a Root Node?

The root node is the first or topmost node in a tree structure. Each tree contains exactly one root node, which is unique in that it has not been connected to any other node prior to its position.

74. What Is the Red-Black Tree Data Structure?

A Red-Black Tree is a type of self-balancing binary search tree in which each node is assigned a color—either red or black. The main goal of a Red-Black Tree is to keep the tree balanced during insertions and deletions, which facilitates efficient data retrieval and manipulation.

The properties of a Red-Black Tree are:

- Node Color: Each node is either red or black.

- Root Property: The root node is always black.

- Red Property: No two red nodes can be adjacent (i.e., a red node cannot have a red child).

- Black Property: Every path from a node to its descendant leaf nodes contains the same number of black nodes.

- Leaf Property: All leaf nodes (NIL nodes) are black.

75. What Are the Advantages and Disadvantages of Using Trees?

Advantages of tree data structures:

- Trees naturally represent hierarchical relationships, making them ideal for file systems, organization charts, and XML/HTML parsing.

- Trees can grow or shrink easily, unlike arrays with fixed sizes.

- Trees like Binary Search Trees (BST) maintain a natural ordering of elements, which is useful for sorting and range queries.

Disadvantages of tree data structures:

- Implementing and maintaining tree structures, especially balanced ones, can be complex.

- Due to pointer storage, trees use more memory than arrays or linked lists.

- Keeping trees balanced (for optimal performance) requires additional operations and complexity.

76. What Is a Binary Search Tree?

A Binary Search Tree is a data structure that organizes and stores data in a sorted order. Each node can have up to two children: a left child with values less than the node’s value and a right child with values greater than the node’s value. This hierarchical arrangement facilitates efficient searching, insertion, and deletion operations.

77. How Do Self-Balancing Trees Like AVL or Red-Black Trees Work?

Self-balancing trees, such as AVL trees and Red-Black trees, maintain balanced heights to ensure efficient search, insertion, and deletion operations. They do this by automatically adjusting their structure using specific rules:

- AVL Trees: Ensure that the heights of the two child subtrees of any node differ by no more than one. They use rotations to restore balance whenever an insertion or deletion causes an imbalance.

- Red-Black Trees: Maintain balance through a set of color-based rules (red and black nodes) and rotations. They ensure that no path from the root to a leaf is more than twice as long as any other such path, which helps keep the tree balanced.

These balancing techniques ensure that operations like search, insertion, and deletion remain efficient.

78. Describe the Different Tree Traversal Methods (Preorder, Inorder, Postorder)

Preorder Traversal:

- Order: Root -> Left -> Right

- Process:

- Visit the root node.

- Recursively traverse the left subtree.

- Recursively traverse the right subtree.

- Order: Left -> Root -> Right

- Process:

- Recursively traverse the left subtree.

- Visit the root node.

- Recursively traverse the right subtree.

- Order: Left -> Right -> Root

- Process:

- Recursively traverse the left subtree.

- Recursively traverse the right subtree.

- Visit the root node.

Inorder Traversal:

Postorder Traversal:

79. How Can You Convert a Binary Search Tree Into a Sorted Array?

To convert a binary search tree (BST) into a sorted array, you can perform an in-order traversal of the tree. This traversal visits nodes in ascending order, naturally producing a sorted sequence. As you traverse the BST, append each visited node's value to an array. This method effectively transforms the tree into a sorted array, reflecting the nodes' values in ascending order, making it a key aspect for the topic in data structure interview questions.

80. What Is a Minimum Spanning Tree?

A minimum spanning tree is a subgraph of a connected, undirected graph that includes every vertex and has the lowest possible total edge weight while connecting all nodes without creating any cycles. This concept is crucial in network routing and clustering algorithms and is a common question asked in data structure interview questions.

81. How Do You Find Out if Two Trees Are Identical?

Two binary trees are considered identical if they contain the same data in the same structure. To determine if the two trees are identical, compare the root nodes’ data and recursively check the left and right subtrees. This approach ensures both the data and structure match. This question is commonly asked in data structure interview questions.

You can use the following algorithm:

- Compare the data of the root nodes: Check if treeA's root data equals treeB's root data.

- Recursively check the left subtrees: Call the function identicalTree(treeA->left, treeB->left).

- Recursively check the right subtrees: Call the function identicalTree(treeA->right, treeB->right).

- Return true if all comparisons are true (i.e., both the data and subtrees match).

82. What Is an AVL Tree?

An AVL Tree is a height-balanced binary search tree where each node has a balance factor, calculated as the difference between the heights of the left and right subtrees. This balancing factor ensures that the tree remains balanced, with operations maintaining O(log n) time complexity. This concept frequently appears in data structure interview questions.

83. What Is a Graph?

A graph is a non-linear data structure made up of vertices and edges. Vertices are sometimes called nodes, and edges are the lines or arcs that link any two nodes within the graph. Formally, a graph is defined as a set of vertices V and a set of edges E and is represented as G(V, E).

84. Differentiate Between Directed and Undirected Graphs

In a directed graph, edges have a specific direction from one vertex to another, indicating a one-way relationship. In contrast, an undirected graph has edges with no direction, meaning the connection between vertices is bidirectional.

This topic of graphs is a major focus in data structure and often appears in data structure interview questions.

The following are the differences between directed and undirected graphs:

| Characteristic | Directed Graphs | Undirected Graphs |

|---|---|---|

| Representation | Arrows between nodes. | Simple lines between nodes. |

| Adjacency | It can be one-way. | Always two-way. |

| Edge Pairs | (A, B) ≠ (B, A) | (A, B) = (B, A) |

| Degree | In-degree and out-degree. | Single degree concept. |

| Traversal | It can have unreachable nodes. | All nodes are reachable if connected. |

| Cycles | It can have directed cycles. | Cycles are undirected. |

| Use Cases | Modeling one-way relationships (e.g., web links, flow charts). | Modeling mutual connections (e.g., social networks, road maps). |

85. Describe Common Graph Types

Here are some common graph types:

- Undirected Graph: Edges have no direction. If vertex A is connected to B, B is also connected to A.

- Directed Graph (Digraph): Edges have a direction. A can connect to B without B necessarily connecting to A.

- Directed Acyclic Graph (DAG): A directed graph with no cycles.

- Cyclic Graph: Contains at least one cycle (a path that starts and ends at the same vertex).

- Acyclic Graph: Contains no cycles.

- Complete Graph: Every vertex is directly connected to every other vertex.

86. Explain Topological Sorting and How It Can Be Implemented in a Directed Acyclic Graph (DAG)

Topological sorting of a Directed Acyclic Graph (DAG) arranges the vertices in a linear order such that for every directed edge (u, v), vertex u precedes vertex v. This ordering is only possible if the graph has no directed cycles.

The sorting can be implemented using two main approaches:

- Depth-First Search (DFS) Algorithm: Perform DFS traversal and push each vertex onto a stack. The topological order is obtained by popping vertices from the stack.

- Kahn's Algorithm: Use in-degrees to iteratively remove vertices with zero in-degrees and add them to the ordering, updating the in-degrees of their neighbors.

This topic is a key concept in data structure and frequently appears in data structure interview questions.

87. What Is a Linear Search Algorithm?

Linear Search is a technique for locating an element within a collection by inspecting each item sequentially. In this method, each element is checked one at a time until the desired element is found. This approach is also referred to as sequential search.

88. What Is a Binary Search Algorithm?

A Binary Search Algorithm is an algorithm for locating the position of a target value in a sorted array. The algorithm works by continually dividing the search range into two halves. It compares the target value with the middle element of the range and adjusts the search interval accordingly until the target is located or the range is empty.

89. Explain the Difference Between Linear and Binary Search

Linear search checks each element in a collection sequentially until the target is found, making it suitable for unsorted data with O(n) time complexity. In contrast, binary search efficiently finds an element by repeatedly dividing a sorted collection in half, achieving O(log n) time complexity but requiring sorted data. This topic often appears in data structure interview questions.

Here are the differences between linear search and binary search:

| Characteristic | Linear Search | Binary Search |

|---|---|---|

| Algorithm type | Sequential | Divide and conquer. |

| Data structure requirement | Unordered or ordered list. | Sorted list |

| Time complexity | O(n) | O(log n) |

| Best case scenario | O(1) (first element). | O(1) (middle element). |

| Worst case scenario | O(n) (last element or not found). | O(log n) |

| Space complexity | O(1) | O(1) for iterative, O(log n) for recursive. |

| Suitable for | Small datasets, unsorted lists. | Large, sorted datasets. |

| How it works | Check each element sequentially. | Repeatedly divides the search interval in half. |

90. What Is Bubble Sort and How Does It Work?

Bubble Sort is one of the most basic sorting algorithms. It works by repeatedly comparing and swapping adjacent elements if they are not in the correct order. Due to its high average and worst-case time complexity, Bubble Sort is unsuitable for sorting large datasets.

Here's how it works:

- The algorithm starts at the beginning of the array.

- It compares the first two elements. If the first is greater than the second, they are swapped.

- It then moves to the next pair of adjacent elements, compares them, and swaps if necessary.

- This process continues until the end of the array is reached.

- The algorithm then starts over from the beginning and repeats steps 2-4.

- Each complete pass through the array is called a "bubble," as larger elements "bubble up" to their correct positions at the end of the array.

- The algorithm continues making passes through the array until a pass is made with no swaps, indicating the array is fully sorted.

91. What Is Insertion Sort, and How Does It Differ From Selection Sort?

Insertion Sort works by taking elements one by one from the unsorted list and placing each into its correct position within the sorted portion, ensuring that the sorted part is always in order. It is stable and efficient for small or nearly sorted datasets.

Selection Sort, on the other hand, repeatedly selects the smallest (or largest) element from the unsorted portion of the list and swaps it with the first unsorted element, effectively growing the sorted portion from the start. Unlike Insertion Sort, Selection Sort is not stable, as it may change the relative order of equal elements. This topic about insertion and selection sort often appears in data structure interview questions.

92. How Does Merge Sort Work?

Merge Sort is a sorting algorithm that uses the divide-and-conquer strategy. It works by recursively splitting the input array into smaller segments, sorting these segments, and then merging them back together to form the sorted array.

To put it simply, Merge Sort divides the array into two parts, sorts each part individually, and then merges the sorted sections. This process repeats until the array is completely sorted.

Here’s how Merge Sort works, step by step:

- Divide: Recursively break down the array into two halves until the subarrays cannot be divided further.

- Conquer: Sort each of these subarrays individually using Merge Sort.

- Merge: Merge the sorted subarrays into a single sorted array, repeating the process until the entire array is sorted.

93. Explain the Shell Sort Algorithm

Shell Sort is an enhancement of Insertion Sort that allows elements to be swapped across larger gaps initially, which helps to reduce the number of shifts needed. By starting with a larger gap and progressively reducing it, the algorithm performs a series of Insertion Sorts on subarrays defined by the gap. This approach can significantly improve the efficiency of sorting compared to the standard Insertion Sort.

The time complexity of Shell Sort can vary depending on the gap sequence used, with best-known sequences resulting in complexities generally between O(n log2n) or O(n3/2).

94. How Does Quick Sort Work?

QuickSort is a key topic in data structure interview questions, and QuickSort is known for using the Divide and Conquer strategy. It selects an element as a pivot, partitions the array around this pivot, and places the pivot in its correct position within the sorted array.

Here are the steps of how quick sort works:

- Choose a Pivot: Select an element from the array to serve as the pivot. The choice of pivot can vary; common strategies include selecting the first element, the last element, the median, or using a random element.

- Partitioning: Rearrange the array so that all elements less than the pivot are on one side and all elements greater than the pivot are on the other side. The pivot ends up in its correct sorted position.

- Recursively Apply: Apply the same process recursively to the subarrays on either side of the pivot. This continues until the subarrays are small enough to be considered sorted.

- Combine: Since the array is sorted in place, no combining step is needed; the array is sorted once the recursive calls are complete.

Quick Sort has an average time complexity of O(n log n),but in the worst case (e.g., when the pivot is the smallest or largest element), it can degrade to O(n2).

95. How Does Depth-First Traversal(DFT) Work?

Depth-First Traversal (DFT) explores a graph or tree by starting at the root (or an arbitrary node) and proceeding as far as possible along each branch before backtracking. This is typically implemented using a stack data structure or recursion.

Below are the steps to start with DFT:

- Begin at the root node or any starting node.

- Visit the first unvisited adjacent node, mark it as visited, and continue this process recursively or by using a stack until you can no longer go deeper.

- Once all nodes along the current path are explored, backtrack to explore other unvisited paths.

- Continue this process until all nodes are visited.

DFT can be implemented using either an explicit stack or recursion, with both approaches effectively managing the traversal.

96. How Does Breadth-First Traversal Work?

Breadth-First Traversal (BFS) is a graph traversal algorithm that starts at a specified node and explores all its adjacent nodes first, then moves on to the next level of nodes. This process continues until all reachable nodes are visited.

How it works:

- Start at the root node or any chosen starting node.

- Explore all adjacent, unvisited nodes and mark them as visited.

- Add these nodes to a queue for subsequent exploration.

- Dequeue a node from the front of the queue, and repeat steps 2 and 3 for its unvisited neighbors.

- Continue this process until the queue is empty, indicating that all nodes have been visited.

This approach ensures that nodes are visited level by level, making BFS particularly useful for finding the shortest path in unweighted graphs. BFS is a crucial topic in data structures and is often asked questions during data structure interview questions.

97. What Is a Recursive Algorithm?

A recursive algorithm is a method of solving a problem by breaking it down into smaller instances of the same problem. It works by calling itself with a reduced input until it reaches a base case, which is a simple instance that can be solved directly.

Structure of a recursive algorithm:

- Base Case(s): The condition(s) under which the problem can be solved directly without further recursion.

- Recursive Case: The part of the algorithm where it calls itself with a simpler or smaller instance of the problem.