Cross Browser Testing Strategy Explained in Three Easy Steps

Deeksha Agarwal

Posted On: March 1, 2018

29044 Views

10 Min Read

When you hear the term cross browser testing, what immediately comes to mind? Probably its literal meaning: testing an application across various browsers.

But it’s not as simple as it sounds.

Cross browser testing is a whole ecosystem in itself, and it requires a lot of planning and preparation before you go ahead with it.

In this post, I want to dig deeper into the cross browser testing process, focusing on efficient strategies that cut down the time and effort required for high impact cross browser testing.

Objectives Of Cross Browser Testing

Before delving deeper into cross browser testing, we must be clear about the objectives we want to achieve through our testing process. In summary, cross browser testing serves the following two purposes:

- Discovering bugs: This involves trying to break your application in various ways in order to find the bugs.

- Sanity Check: This involves making sure that your audience receives the same experience across various platforms.

To meet these two objectives, you can plan your testing in two ways.

One, cover the browsers most used by your target audience first and test outlier browsers later. For example, if I want to run a sanity check for a product targeted at a US audience, I would test my website on the latest versions of Chrome, Safari, and Firefox, which together cover over 50% of my audience. Ensuring compatibility across these three browsers alone takes care of the majority of the US audience.

Two, if I want to find bugs in my application, I can just throw it at the most problematic browser and see what breaks.
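The first approach, covering the most-used browsers first, can be sketched in a few lines of TypeScript. This is only a minimal sketch: the share numbers are made up for illustration, and `BrowserShare` and `browsersToCover` are hypothetical names, so plug in the numbers from your own analytics.

```typescript
// Hypothetical usage shares (percent of your audience), for illustration only.
interface BrowserShare {
  name: string;
  share: number;
}

const audience: BrowserShare[] = [
  { name: "Firefox", share: 8 },
  { name: "Chrome", share: 48 },
  { name: "Edge", share: 6 },
  { name: "Safari", share: 32 },
  { name: "Other", share: 6 },
];

// Pick the most-used browsers first until the target coverage is reached.
function browsersToCover(shares: BrowserShare[], targetPercent: number): string[] {
  // Copy before sorting so the caller's array is left untouched.
  const sorted = shares.slice().sort((a, b) => b.share - a.share);
  const picked: string[] = [];
  let covered = 0;
  for (const entry of sorted) {
    if (covered >= targetPercent) {
      break;
    }
    picked.push(entry.name);
    covered += entry.share;
  }
  return picked;
}

console.log(browsersToCover(audience, 85).join(", ")); // Chrome, Safari, Firefox
```

Everything that is not in the returned list is an outlier browser that you can leave for a later round.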

This is the general case, but if my application is getting updated regularly, then how am I gonna go about cross browser testing?

Well, in that case, whenever a bug turns up I have one of the following three options.

- Ignore the bug

- Fix it and hope that the updates haven’t broken anything

- Fix it and test again on all the browsers.

The third case is the ideal case for any organization but doesn’t the process seem to be tiresome and lengthy?

Yes, it does. So let’s figure out what we can do to perform cross browser testing with less effort while creating maximum impact on the application.

Cross Browser Testing In A Restaurant

Doesn’t this seem a bit unusual? Well, no!

Let me explain how.

Consider you are a restaurant chain owner planning to introduce a whole new dish into the market. You’ve been working on it for quite a long time, and you don’t have a damn clue how it is gonna be received once it is released.

You, of course, have put your heart and soul into it and you just want to deliver it with the utmost perfection. So, how is this gonna work out?

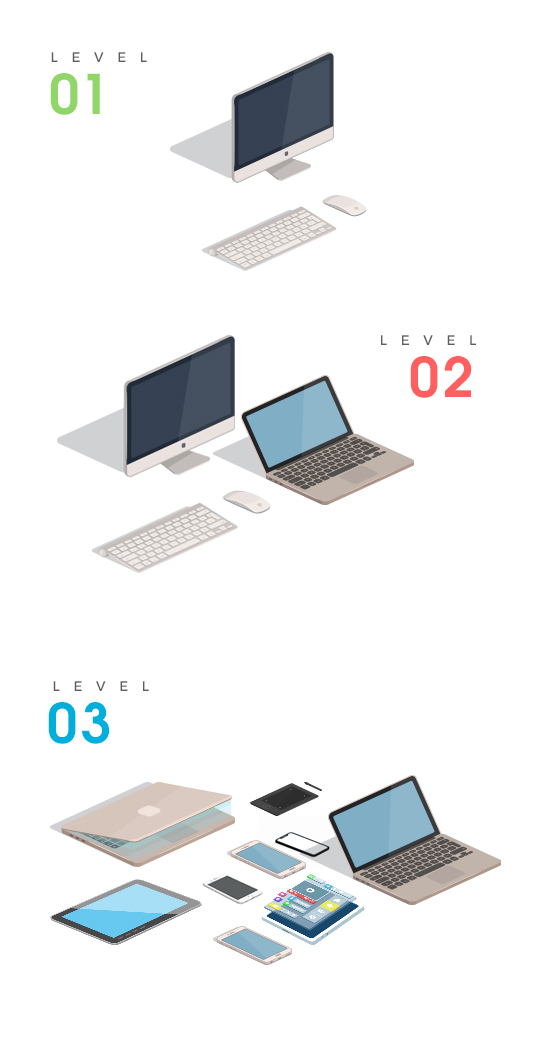

- Level 1: Development: You’ll prepare the dish and first check for yourself if everything is in place or not. Once you are satisfied with that you’ll go to the next level.

- Level 2: Controlled Testing: Different people have different tastes and suggestions. So, you’ll go for a quick variant check by trying it in your local restaurant. You would test your new dish in a controlled environment, serve it to a chosen set of customers, and get constant feedback to improve it further.

- Level 3: Testing in Production: Once you feel that different kinds of people have accepted your dish, you can expand further and add it to the full menu across your restaurant chain, one restaurant at a time. You still take regular feedback on the new dish, though, finding ways to improve it.

The same is the case with cross browser testing. You put your heart and soul into developing your web app or website, and again you want to deliver it with perfection. So in order to deliver your web app or website into the market, you need to go through these three levels of cross browser testing. You start by developing the app itself with a specific purpose, target audience, and device type in mind.

Then for level 2, you test your app in a controlled environment on the most readily available browsers, or on the browsers that the largest percentage of your audience is using. In the final step, you test your app on all the browsers your audience uses to access it, so that your web app or website works flawlessly on every platform it is opened on.

Getting Started With Cross Browser Testing

Before you decide to perform cross browser testing, you need to do a lil bit of homework on the user data: what users in that specific area like, what they use the most, their demographics, and so on.

Suppose the dish you’ve been trying to release is a non-veg dish and the customers in that particular area are mostly vegetarians. Then there is a strong chance that it will perform poorly.

Similarly, if 85% of the users in an area use Chrome, then testing for perfection on Chrome comes first. No matter what, you cannot afford any bugs on Chrome. So, you need to figure this out on highest priority. You can check out this infographic on some of the browser statistics that can help you in figuring out the most used browsers on mobile and desktop.

Also, analytics tools like Google Analytics are a great help in analyzing user behaviour, especially in finding out which browsers your users use to access your website. 😉

Once you are done with the data study, it’s time to move up through the three levels; reaching the third level is what we are aiming at.

The Three Levels In Cross Browser Testing

The First Level: Exploring Bugs

Wanna test the dish yourself first? Go to the first level.

Once you develop a website or web app, you need to test it for bugs on your local browser by running test scenarios that could cause your website to break, like:

- Check the breakpoints

- Check for app behaviour with JavaScript turned off

- Check for zoom in and zoom out

- Check the website using only keyboard navigation

- Check the scripts that run on page load

These bugs are browser specific, as you are checking for them in one specific browser and figuring out the possible defect scenarios in that browser.

You need to be very careful while fixing a bug that you don’t introduce more bugs in the process. So, after every fix you have to run all the test cases again to make sure that nothing that worked in the previous version is now broken.

The Second Level: Strategizing

Once done with the first level, you need to move to the second level, which involves variant testing. At this level you need to act smart, else everything is gonna get messed up.

You have already identified browser specific bugs. It’s time to start testing it across different browsers.

Strategize your testing so that it reaches a minimum effort, high impact level.

- Analysis of bug distribution: Classify your browsers into three zones based on the risk associated with them.

a) High risk browsers

b) Medium risk browsers

c) Low risk browsers

Now analyze your bugs against these three groups of browsers. The major aim here is to cover all the bugs with fewer rounds of testing.

Say you have the following scenario, where the bugs found in various browsers are denoted by ticks.

Bug distribution

Now there are two ways: either start from low risk browsers and move to high risk browsers, fixing bugs as you go, or start the other way around. Recall, our main aim is to cover all scenarios with fewer iterations. So, if you start from the high risk browsers by fixing Bug 1, Bug 3, Bug 4, and Bug 5, then there is a possibility that you won’t need to fix Bug 4 in the medium risk browsers.

When you fix Bug 2 in the medium risk browsers, the chance of Bug 2 remaining in the low risk browsers becomes negligible. So you need to fix 5 bugs and perform 6 rounds of testing on all the bugs.

‘Eliminating a bug in a high risk browser automatically decreases its chances of being present in low risk browsers’.

So, you have to carefully analyze the bugs.

- Identify problematic browsers: As I have already mentioned, fixing bugs in high risk browsers is where you need to start, so you need to figure out the most problematic browsers and make your code compatible with them. This will reduce your effort, and the less problematic browsers become easier to handle.
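The bug distribution analysis above can be sketched roughly like this. The bug-to-zone data is an illustrative assumption that follows the narrative in the text, and `BugReport`, `zoneOrder`, and `planFixes` are hypothetical names. The idea is simply to assign every bug to the riskiest zone it shows up in, fix it there, and only re-test it in the lower risk zones.

```typescript
type Risk = "high" | "medium" | "low";

// Illustrative data only: each bug lists the risk zones where it was observed.
interface BugReport {
  id: string;
  seenIn: Risk[];
}

const reports: BugReport[] = [
  { id: "Bug 1", seenIn: ["high"] },
  { id: "Bug 2", seenIn: ["medium", "low"] },
  { id: "Bug 3", seenIn: ["high", "low"] },
  { id: "Bug 4", seenIn: ["high", "medium"] },
  { id: "Bug 5", seenIn: ["high"] },
];

// Zones ordered from riskiest to least risky.
const zoneOrder: Risk[] = ["high", "medium", "low"];

// Group bugs by the riskiest zone in which each one appears.
function planFixes(bugs: BugReport[]): { zone: Risk; fixHere: string[] }[] {
  return zoneOrder.map((zone) => {
    const fixHere = bugs
      .filter((bug) => {
        // The first zone in zoneOrder that contains the bug is its riskiest one.
        const riskiest = zoneOrder.filter((z) => bug.seenIn.indexOf(z) !== -1)[0];
        return riskiest === zone;
      })
      .map((bug) => bug.id);
    return { zone, fixHere };
  });
}

planFixes(reports).forEach((step) => {
  console.log(step.zone + ": " + (step.fixHere.join(", ") || "nothing new to fix"));
});
```

With this sample data, four bugs get fixed in the high risk zone, one in the medium risk zone, and the low risk zone only needs a re-test.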

Now you may ask: what is a high risk browser?

Well, the answer is really tricky, because it depends on the browser features that your application is using. For example:

If your JavaScript uses ‘indexOf’ on arrays, there is a chance that it may break in IE 8, which does not support Array.prototype.indexOf.

How do I find out which browsers are high risk for me?

The answer to this question is: you can use the Can I Use tool to figure out browser compatibility for various technologies.
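For the ‘indexOf’ case, one common way to protect yourself is feature detection: check whether the method exists before relying on it. Here is a minimal sketch of that idea. `hasArrayIndexOf` and `indexOfCompat` are hypothetical helper names, not part of any library.

```typescript
// Array.prototype.indexOf is missing in Internet Explorer 8 and earlier,
// so check for the method before relying on it.
function hasArrayIndexOf(): boolean {
  return typeof Array.prototype.indexOf === "function";
}

// Use the native method when it exists, otherwise fall back to a plain loop.
function indexOfCompat<T>(items: T[], target: T): number {
  if (hasArrayIndexOf()) {
    return items.indexOf(target);
  }
  for (let i = 0; i < items.length; i++) {
    if (items[i] === target) {
      return i;
    }
  }
  return -1; // same "not found" value as the native method
}

console.log(indexOfCompat(["chrome", "safari", "firefox"], "safari")); // 1
```

The more checks like this your code needs for a given browser, the more likely it is that the browser belongs in your high risk zone.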

The Third Level: Sanity Testing

Once you are done with finding and fixing bugs in the second level, it’s time to finally move to ‘THE THIRD LEVEL’, i.e. sanity testing.

Testing at this level is like the 80:20 rule. For me it goes like this:

‘80% of the browsers contain 20% of the bugs, and the remaining 80% of the bugs show up in the remaining 20% of the browsers’.

So you now need to test those 20% of the browsers to find and fix those 80% of the bugs.

So where are you going to start?

Again, strategy and a bit of common sense play the Ace of Spades here.

Browser Prioritizing

Start testing by prioritizing the browsers based on their relevance, beginning with the most relevant browser and moving on to the least relevant one. Makes sense, right?

If your audience uses Safari the most, then you need to test on Safari first, followed by the other browsers, ending with the ones that are used the least.

This is basically done in the descending order of market share.

What’s the point of testing your web app on a browser that is used by 0.05% of the population? So, your testing needs to make sense here.

Based on browser prioritizing, decide for yourself which browsers make the most sense to you and which browsers make the least sense.
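Browser prioritizing can be sketched as a sort plus a cutoff. Again, this is only a sketch under assumptions: the usage numbers are invented, the 1% cutoff is an arbitrary choice, and `BrowserUsage` and `prioritize` are hypothetical names.

```typescript
// Hypothetical audience data (percent of users), for illustration only.
interface BrowserUsage {
  name: string;
  sharePercent: number;
}

const usage: BrowserUsage[] = [
  { name: "Chrome", sharePercent: 38 },
  { name: "Legacy browser", sharePercent: 0.05 },
  { name: "Safari", sharePercent: 41 },
  { name: "Edge", sharePercent: 8.95 },
  { name: "Firefox", sharePercent: 12 },
];

// Drop browsers below the cutoff, then order the rest by descending share.
function prioritize(browsers: BrowserUsage[], minSharePercent: number): string[] {
  return browsers
    .filter((b) => b.sharePercent >= minSharePercent) // filter returns a new array
    .sort((a, b) => b.sharePercent - a.sharePercent)
    .map((b) => b.name);
}

console.log(prioritize(usage, 1).join(", ")); // Safari, Chrome, Firefox, Edge
```

Where you put the cutoff is your call; the point is that the 0.05% browser drops off the list instead of eating into your testing time.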

Go back to level 2. Follow the same procedure for browser specific bugs across high, medium, and low risk browsers, and get ready to see your dish getting appreciation from every single person.

This is how you can achieve a level of perfection in cross browser testing with minimal effort and high impact.
