AI-Empowered Software Testing [Spartans Summit 2024]

LambdaTest

Posted On: February 6, 2024

Since 2023, AI has dominated discussions, promising to revolutionize our lives. The software testing industry, too, has not been left behind, with many tools integrating AI features.

But the big question is, are these AI features truly game-changers for software testing? Can they empower testers to be more efficient and work faster?

To answer these questions, in this session of Spartans Summit 2024, Daniel Knott, Head of Software Testing at MaibornWolff GmbH, provides a comprehensive overview of the current AI features available for software testers. He distinguishes between features worth exploring and those that merely serve marketing purposes.

If you couldn’t catch all the sessions live, don’t worry! You can access the recordings conveniently by visiting the LambdaTest YouTube Channel.

Role of Generative AI

Daniel starts the session by discussing the significance of generative AI, pointing out that it has become a central point in the tech world. He references Business of Apps, highlighting that features such as voice assistants, chatbots, and facial recognition generated over $2.5 billion in 2022. Daniel notes the rapid growth of AI, especially in 2023, and its transformative potential in various industries.

✨AI is taking over the world by storm & the QA domain is also leveraging generative AI for a wide range of use cases! pic.twitter.com/LETSfAfk2j

— LambdaTest (@lambdatesting) February 6, 2024

He illustrates the impact of generative AI with ChatGPT. He compares the time it took Netflix to reach 1 million users (3.5 years) with the time it took ChatGPT (5 days) after its launch in late 2022. This contrast emphasizes the quick adoption and disruption caused by AI.

After that, Daniel discusses how, since 2023, AI has been a dominant topic, with new products and services developed regularly. He notes the increasing presence of AI in daily life, promising to make tasks easier, faster, and more productive. This sets the stage for the relevance of AI in the software testing industry and the subsequent challenges that testers may face.

Daniel mentions the challenges software testers encounter in the face of AI integration. One key challenge is the fear among testers that AI might replace their roles.

Drawing parallels with previous concerns about automation, Daniel reassures testers that AI should be viewed as an assistant rather than a replacement. He emphasizes the importance of critical thinking and human judgment in ensuring the success of software testing processes, even with AI integration.

AI can be empowering in the realm of software testing, but it still poses a few challenges for software testers. In his insightful talk, Daniel shares some helpful tips for overcoming AI challenges. pic.twitter.com/Ff7ibMljIr

— LambdaTest (@lambdatesting) February 6, 2024

Unveiling the AI Toolbox for Testers

Daniel talks about the hype surrounding AI, with new tools and services promising to optimize testing. He emphasizes the need to differentiate between the genuine value of tools and mere marketing buzz.

He then delves into the current state of AI features available to testers:

- Self-Healing Tests: This involves monitoring your codebase automatically and fixing flaky tests caused by changing labels or IDs. This translates to less time spent chasing down these issues and more focus on higher-level testing.

- AI-Powered Test Analytics: AI can help by generating summaries of test case health and execution results and predicting future trends. This empowers testers to make data-driven decisions and identify potential quality bottlenecks.

- Test Code Generation: If you are stuck writing repetitive test code, tools like ChatGPT can generate test scripts based on your prompts. However, Daniel cautions against blindly trusting the output. Treat it as a starting point, using your critical thinking to adapt and refine the code for your needs.

- Test Data Generation: Generating realistic test data can be a time-consuming process. AI can help by suggesting diverse data points. Remember that public AI models might not understand your specific context or data requirements. Consider using a private, organization-specific model for better results.

- Code Explanation: AI tools can automatically generate documentation for your code! This can be a huge time saver, but accuracy is paramount. Double-check the explanations to ensure they align with your code’s true functionality.

- Visual Checks: AI can be employed to generate graphics, which can be compared to the expected outcomes.
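
To make the self-healing idea from the list above more concrete, here is a minimal Python sketch. It is not how any particular vendor implements the feature. It assumes the page is just a list of plain dictionaries, and the `PAGE` data, the `find_with_healing` function, and the 0.6 threshold are all hypothetical. Simple string similarity from the standard library stands in for an AI model.

```python
from difflib import SequenceMatcher

# Hypothetical page snapshot: plain dicts, not a real browser DOM.
PAGE = [
    {"id": "btn-submit-v2", "tag": "button", "label": "Submit order"},
    {"id": "btn-cancel", "tag": "button", "label": "Cancel"},
    {"id": "email", "tag": "input", "label": "Email address"},
]


def similarity(a, b):
    """Return a 0..1 similarity ratio between two strings."""
    return SequenceMatcher(None, a, b).ratio()


def find_with_healing(page, locator, threshold=0.6):
    """Look up an element by id; fall back to the closest match if the id changed.

    Returns (element, healed), where healed is True when the fallback was used.
    Returns (None, False) when no candidate scores above the threshold.
    """
    # 1. Exact match on the recorded id: no healing needed.
    for element in page:
        if element["id"] == locator["id"]:
            return element, False

    # 2. Fallback: score same-tag candidates on id and label similarity.
    best, best_score = None, 0.0
    for element in page:
        if element["tag"] != locator["tag"]:
            continue
        score = (similarity(element["id"], locator["id"])
                 + similarity(element["label"], locator["label"])) / 2
        if score > best_score:
            best, best_score = element, score

    if best is not None and best_score >= threshold:
        return best, True
    return None, False


# The test was recorded against the old id "btn-submit".
recorded = {"id": "btn-submit", "tag": "button", "label": "Submit order"}
element, healed = find_with_healing(PAGE, recorded)
print(element["id"], healed)  # btn-submit-v2 True
```

The function returns a `healed` flag instead of repairing the locator silently, so a tester can still review what changed.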

The Human Factor in AI Testing

Daniel feels the role of the human factor in AI testing is crucial. He discusses software testers’ challenges and says that AI should not be viewed as a replacement for human expertise but rather as an assistant.

Despite the advancements in AI, he highlights the irreplaceable aspects of human judgment, critical thinking, and the ability to discern nuanced testing scenarios. In addressing the fear among testers about being replaced by AI, Daniel reassures the audience that the future for software testers is bright, and AI should be seen as a tool to enhance efficiency rather than a threat to job security.

Increased Efficiency, assistance with complex tasks, and more job opportunities are some of the important outcomes of using #AI for software testing. pic.twitter.com/jy4jR22px7

— LambdaTest (@lambdatesting) February 6, 2024

He believes that the human element, with its critical thinking skills, is vital in judging the outcomes of AI in testing and providing valuable feedback to developers and stakeholders. By acknowledging the limitations of AI, such as the tendency of large language models to provide incorrect answers, Daniel advises testers not to trust AI output blindly and stresses the importance of human awareness and intervention.
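
One practical way to avoid trusting AI output blindly is to run generated test data through explicit checks before it reaches a test suite. The Python sketch below assumes a hypothetical "user" record with `name`, `email`, and `age` fields. The rules and function names are illustrative only and would come from your own requirements in a real project.

```python
import re

# Hypothetical rule for a well-formed email; deliberately simple.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_user(record):
    """Return a list of problems found in one generated record (empty means OK)."""
    problems = []
    name = record.get("name")
    if not isinstance(name, str) or not name.strip():
        problems.append("name is missing or empty")
    email = record.get("email")
    if not isinstance(email, str) or not EMAIL_RE.match(email):
        problems.append("email is not well-formed")
    age = record.get("age")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(age, int) or isinstance(age, bool) or not 0 <= age <= 130:
        problems.append("age is not an integer between 0 and 130")
    return problems


def split_generated(records):
    """Separate generated records into accepted ones and rejected ones with reasons."""
    accepted, rejected = [], []
    for record in records:
        problems = validate_user(record)
        if problems:
            rejected.append((record, problems))
        else:
            accepted.append(record)
    return accepted, rejected


# Stand-in for data returned by an AI tool; no model is called here.
generated = [
    {"name": "Ada Lovelace", "email": "ada@example.com", "age": 36},
    {"name": "", "email": "not-an-email", "age": 250},
]
accepted, rejected = split_generated(generated)
print(len(accepted), len(rejected))  # 1 1
```

Rejected records keep their list of reasons, which gives the tester something specific to judge and to feed back into the prompt.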

Can AI Harm Software Testers?

According to Daniel, AI can be a powerful tool for software testers, but it is essential to approach it critically and not trust it blindly. He acknowledges the potential for AI to bring positive advancements in testing but warns against falling into the same trap as with test automation.

He draws a parallel to the past, where test automation became a hype topic, and some organizations made the mistake of attempting to replace all testing with automation. Daniel suggests that similar misguided approaches might occur with AI. He advises against completely replacing testing with AI and encourages testers to be cautious.

To sum up, Daniel’s key message is that AI can assist and improve testing processes, but testers must stay informed, exercise critical thinking, and not entirely rely on AI. He emphasizes that testers are in a good position, and the human factor in testing remains vital. Checking the outputs and inputs of large language models becomes increasingly important, presenting a positive outlook for the future of software testing.

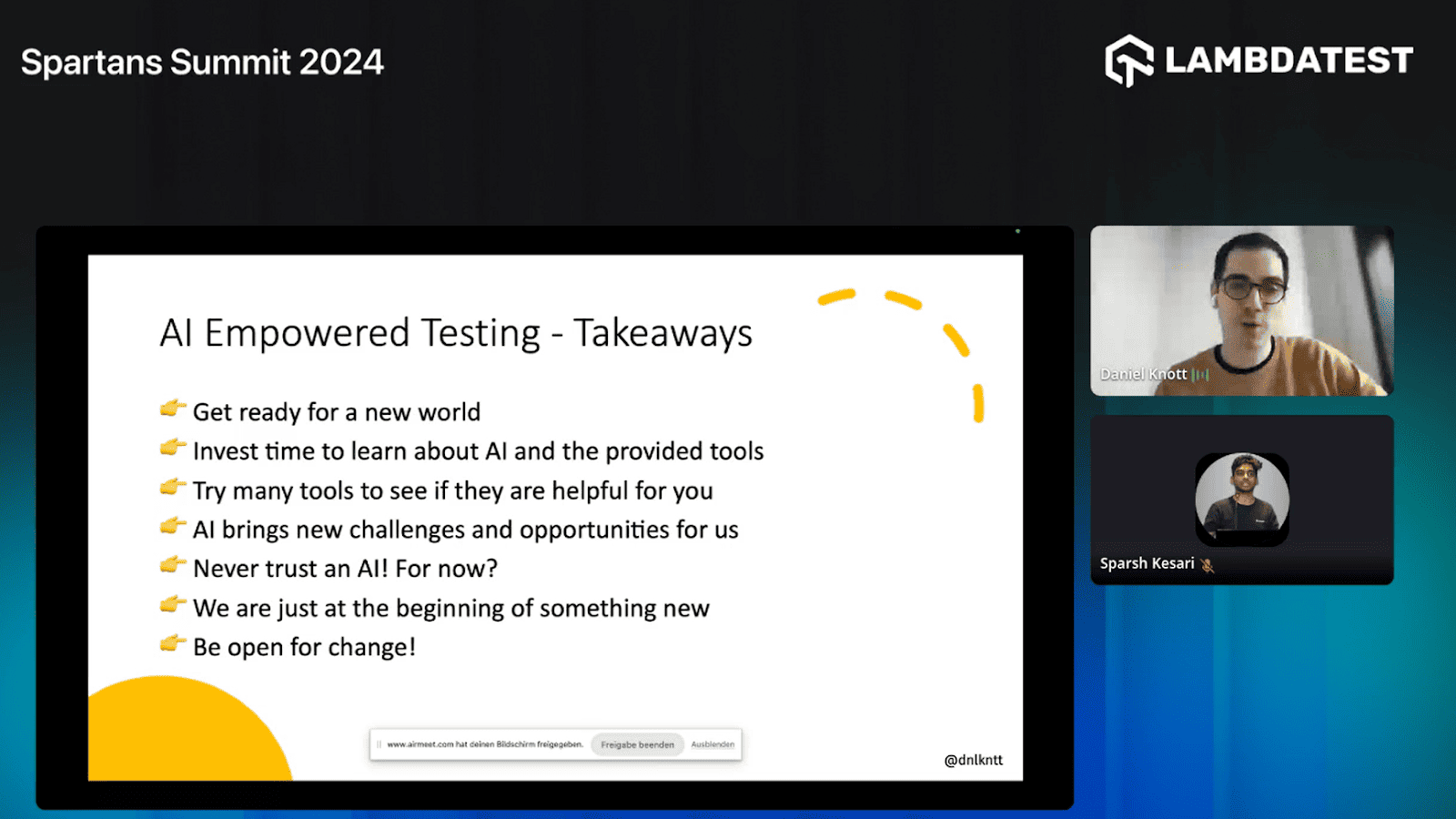

Key Takeaways

Here are some key takeaways from Daniel’s session:

- Get Ready for Change: The industry is undergoing significant changes due to AI. Prepare for this transformation by investing time in learning about AI fundamentals.

- Invest in Learning Fundamentals: Rather than jumping directly into tools, spend time learning the basics of AI. Understand machine learning algorithms, how models are trained, and the foundations of large language models.

- Experiment with AI Tools: Try out various AI tools available in the market. Many vendors offer trial periods, allowing testers to explore and understand how these tools can be beneficial.

- Be Curious: Stay curious and open-minded about AI. Sign up for different tools, experiment with them, and see how they can address specific challenges or opportunities in your context.

- Continuous Learning: Recognize that AI brings new challenges and opportunities. Stay at the forefront of new technology and be proactive in learning how AI can impact your role as a software tester.

- Never Blindly Trust AI: While AI can assist in various tasks, Daniel emphasizes the importance of not blindly trusting AI. Critical thinking and validation are crucial to ensure accurate results.

- The Human Factor in Testing: Highlighting the significance of human testers, Daniel encourages testers to be aware of management or stakeholders proposing to replace testing with AI. Learn from past experiences with test automation and understand that AI should complement, not replace, testing.

- Recommended Reading: Daniel suggests exploring the recommended books on generative AI, AI automation, and other related topics to understand the field better.

- Visual Checks and AI: Daniel discusses the importance of UI design and how visual checks, aided by AI tools, can save time. AI can generate graphics and compare them to identify issues, boosting visual checks.

- Caution with Automation and AI: Drawing parallels with past experiences in test automation, Daniel advises against blindly replacing testing with AI. Testers are urged to be cautious and advocate for the effective integration of AI in testing processes.

- Bright Future for Testers: Despite the advancements in AI, Daniel expresses optimism about the future of testers. Checking the outputs of large language models becomes a crucial task, positioning testers well in the evolving landscape.

Q&A Session

Q. How well do AI-powered testing tools handle big projects or big companies?

Daniel: Define goals and tasks specific to your project. AI can be used in projects of any size, but it’s crucial to consider the specific needs and challenges of your testing process.

Q. Could you please give us the roadmap to transition into AI-based software testing, including recommended tools?

Daniel: Focus on learning the fundamentals of AI, understanding large language models (LLMs), and evaluating which tasks could be optimized with AI tools. He also recommends experimenting with different tools and starting with small experiments.

Q. In the current market, a lot of AI tools are available to the community. How is testing for AI tools different compared to other tools and applications?

Daniel: Testing AI tools requires a different approach, often involving understanding the algorithms, neural networks, and data sets. Critical thinking is essential, and thorough code reviews, especially for changes made by developers, are recommended.

Q. Being in a continuous learning QA industry, how can we utilize AI and integrate it into our daily activities to make our tests more optimized and efficient?

Daniel: Continuous learners in the QA industry can leverage AI to optimize and streamline various testing activities. Identify repetitive or time-consuming tasks such as test case generation, test data management, or automation script creation, and explore how AI tools can enhance efficiency in these areas.

Q. Can a self-healing AI indicate changes made by a developer, such as altering an ID format, and notify that a correction is needed in the test script?

Daniel: While self-healing AI can automatically correct certain changes, it may not always detect developer-induced errors in ID formats. Blindly trusting self-healing AI without code reviews can lead to potential issues. Collaborative efforts between testers and developers and code reviews remain crucial for ensuring the accuracy of test scripts.
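
As a rough illustration of the "notify, don't just fix" idea, the Python sketch below compares the locators a test suite was written against with the ones actually used after healing, and produces notes for a human to review. It assumes the self-healing tool can export both as simple name-to-id mappings. That export, the `diff_locators` function, and the sample data are hypothetical.

```python
def diff_locators(old, new):
    """Compare two {element_name: element_id} snapshots and describe the changes."""
    notes = []
    for name in sorted(old.keys() | new.keys()):
        before, after = old.get(name), new.get(name)
        if before == after:
            continue  # unchanged locator, nothing to report
        if before is None:
            notes.append(f"{name}: new locator {after!r}")
        elif after is None:
            notes.append(f"{name}: locator {before!r} was removed")
        else:
            notes.append(
                f"{name}: id changed {before!r} -> {after!r}; review the test script"
            )
    return notes


# Locators recorded in the test scripts versus locators used after healing.
old = {"submit_button": "btn-submit", "email_field": "email"}
new = {"submit_button": "btn_submit", "email_field": "email"}

for note in diff_locators(old, new):
    print(note)
# submit_button: id changed 'btn-submit' -> 'btn_submit'; review the test script
```

A report like this could be attached to a pull request so that testers and developers review healed locators together during code review.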

Q. What should we learn instead of test automation? Should we stop learning Selenium and concentrate on something else?

Daniel: No, you shouldn’t stop. Staying up to date with test automation tools like Selenium is crucial. However, it’s equally important to broaden your skill set. Consider delving into emerging technologies such as AI in testing. Don’t limit yourself; explore API testing, security testing, and performance testing.

Adaptability is key in our industry, so keep an open mind, embrace change, and continuously upskill. AI is making waves, and incorporating it into your toolkit can bring new efficiencies to your testing practices. Remember, it’s not about abandoning what you know but expanding your capabilities for a more holistic approach to testing in the ever-evolving tech landscape.

If you have more questions, please feel free to drop them on the LambdaTest Community.
