Increasing Product Release Velocity by Debugging and Testing In Production

Kritika Murari

Posted On: April 29, 2021

What is the key to achieving sustainable and dramatic speed gains for your business? Product velocity! It’s important to stay on top of changes in your quality metrics, and to modify your processes (if needed) so that they reflect current reality. The pace of delivery will increase when you foster simple, automated processes for building great software. The faster you push into production, the sooner you can learn and adapt. Monitoring your build and release pipeline is an important part of those efforts. It helps you design better software, which in turn leads to improved product velocity. Moving fast takes a lot of practice, a lot of hard work, and a toolkit that can help you achieve this!

Here’s the secret sauce for all agile processes:

Always ship fast, always have a plan for what’s next, and never compromise on quality.

Easier said than done, right?

Well, that’s what we’re here for! We understand that the debugging process is tedious and annoying, and we will keep working toward making your developer experience seamless. With this in mind, our Product & Growth Manager Harshit Paul got together with Idan Shatz, Developer Advocate at Ozcode, to explain how both testing in production and production debugging can help expedite your product release velocity. In case you missed the power-packed webinar, let us look at the major highlights of the event.

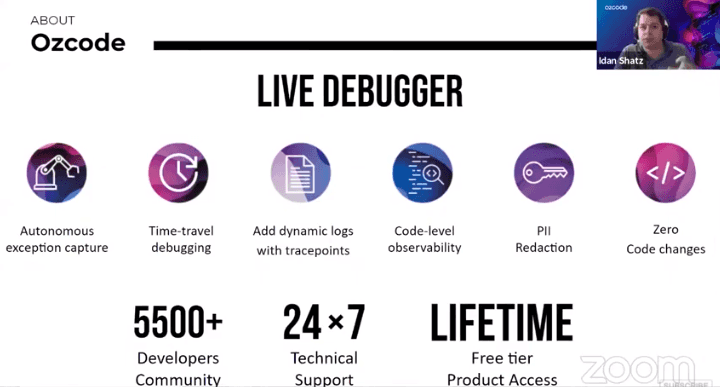

About Ozcode

Based out of Israel, Ozcode is simplifying and disrupting how developers debug issues across all C# and .NET applications. Their super-talented team of engineers aims to give developers and team leads the visibility and insights needed to unleash productivity. The result: improved software delivery at all stages of the SDLC, with debugging time slashed to a great extent.

Here’s a little bit about what they do:

- Ozcode’s Production Debugger is the only data-driven debugging platform that puts production data in the hands of the developers so they can short-circuit the loop between finding errors in production and fixing them in code.

- Ozcode’s Visual Studio extension dramatically enhances your Visual Studio debugging experience enabling you to quickly find the root cause of bugs in .NET applications and fix them at a faster pace. Production Debugger has surely closed the gap between observability and debugging, providing unmatched capabilities to fix errors in QA, staging, and production environments.

- By providing code-level observability into running code at exactly the time and place where errors occur, Ozcode provides the insights needed to resolve errors quickly. This helps in shortening the release cycles.

- From Developers and team leads to QA professionals and DevOps/SREs to business executives, Ozcode establishes accountability and provides all stakeholders with the visibility needed to accelerate software delivery for C# and .NET.

About the Webinar

This insightful webinar was hosted by Harshit Paul, Product & Growth Manager at LambdaTest. Ozcode was represented by Idan Shatz, Developer Advocate. Idan is a Software Quality Evangelist with more than 20 years of experience in programming and software testing. Having served in roles ranging from CTO and VP of Product Development to Team Lead, Idan specializes in distributed testing environments and building developer tools.

Now that you know who the speakers are, it’s time to acquaint yourself with the ins and outs of the webinar.

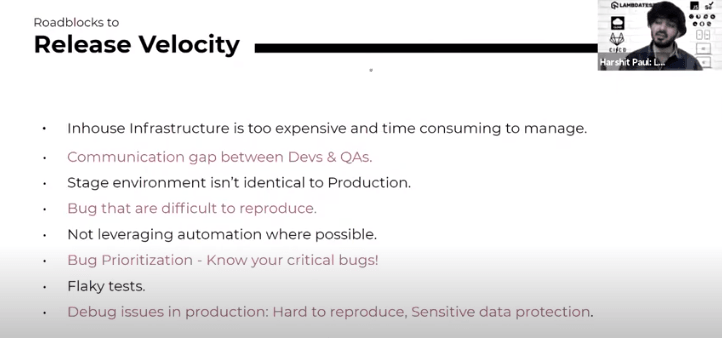

The session started off with Harshit and Idan highlighting the roadblocks to release velocity and the need to ensure that production and staging mirror and complement each other. Together they explained roadblocks such as issues that are difficult to reproduce and debug, lack of automation, and flaky tests.

Roadblocks to Release Velocity

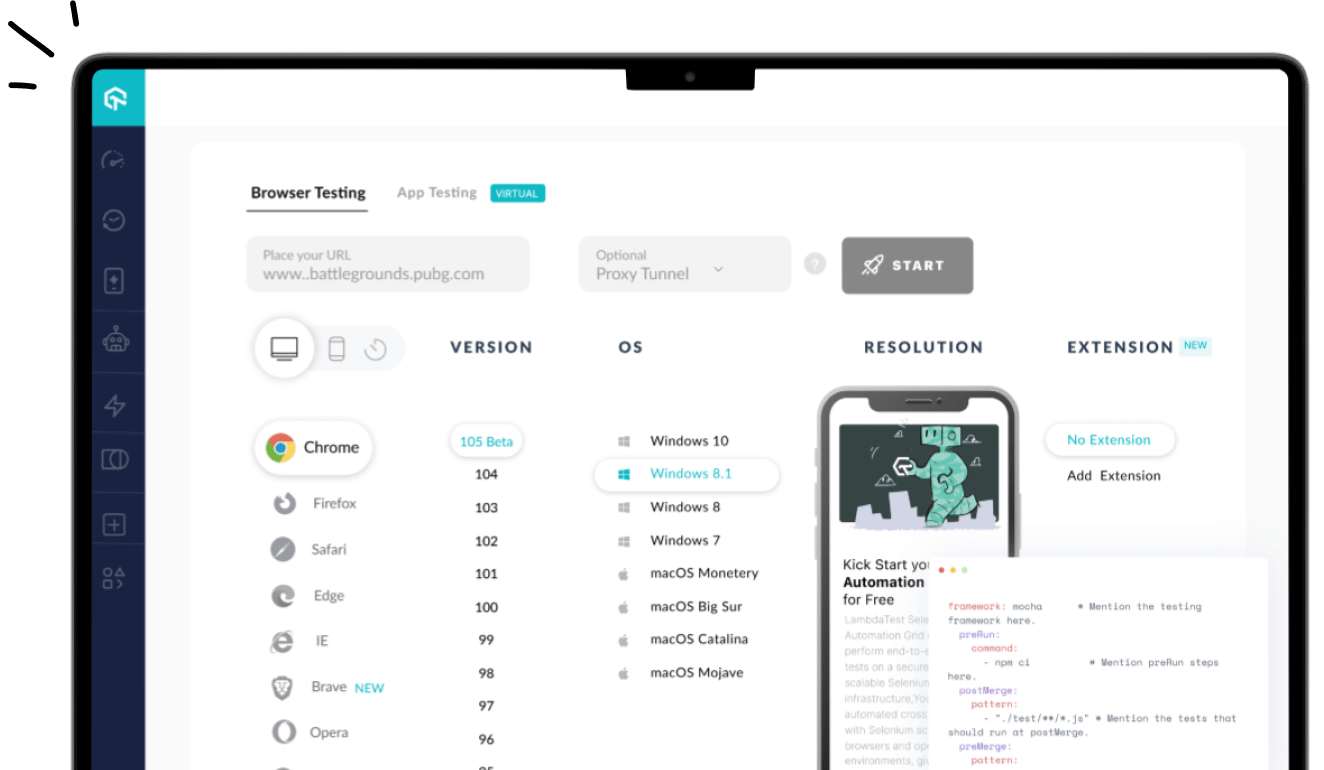

When it comes to cross browser testing, an in-house infrastructure is too time-consuming and expensive to maintain. Different brands launch new devices every month, and your customers could reach your website from any device, operating system, or browser. You have to make sure that a setup is in place to give you good test coverage for your automation suites.

That’s not all. There are a number of roadblocks that can hinder your release cycles. Harshit listed them all and explained the why and how of each issue:

- Communication gap between Developers & QA Engineers.

- Difference between staging and production environments.

- Irreproducible bugs.

- Lack of automation.

- Lack of bug prioritization.

- Flaky tests.

- Debugging issues in production.

Idan also stressed the importance of proper communication between developers and QA engineers. It is best to avoid a back-and-forth situation where one reproduces a bug while the other ignores it. It is essential to have good tools that help QA deliver the information that Devs need in order to resolve the issue.

Furthermore, Idan went on to talk about bugs that a lot of companies consider ‘urban legends’ because they are so hard to reproduce. This is a major limitation for your velocity!

How quickly can you reproduce the issues that happen in QA or to a user in production?

Harshit went on to explain how automation plays a major role in increasing your release velocity. If you’re not leveraging your automation testing suite, or it is not as big as you would want it to be, it is going to slow down your entire release cycle. Every time you push something, it has to go through the same process of regression testing, over and over, which not only takes more time but also reduces the tester’s efficiency.

In order to avoid this, it is important to have an impactful automation testing suite that provides good coverage, such as a regression suite. This way, you not only relieve your testers from repeating the monotonous test cases but also give them enough bandwidth to find those critical edge cases which can help you move faster in the right direction.

While automation offers a chance to increase your product release velocity, there’s a catch: flaky tests. Something that works today might not work tomorrow. You may also come across false positives, false negatives, or an inconsistent test suite, all of which eat into your team’s bandwidth. Here are the major reasons for test flakiness:

- Script incompatibility with the preferred test framework.

- System breakdown due to probable incompatibility between various libraries and frameworks when put together.

- Operating system dependency.
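One rough way to surface flakiness before it slows a release is to rerun a test several times and flag inconsistent outcomes. The sketch below is only an illustration and was not part of the webinar; `classify_test` and `make_alternating_test` are hypothetical names, and a real suite would more likely rely on its framework's rerun support.

```python
from typing import Callable


def classify_test(test_fn: Callable[[], None], runs: int = 5) -> str:
    """Run test_fn `runs` times and report 'pass', 'fail', or 'flaky'."""
    if runs < 2:
        raise ValueError("need at least two runs to detect flakiness")
    outcomes = set()
    for _ in range(runs):
        try:
            test_fn()
            outcomes.add(True)   # this run passed
        except AssertionError:
            outcomes.add(False)  # this run failed
    if outcomes == {True}:
        return "pass"
    if outcomes == {False}:
        return "fail"
    return "flaky"  # both passing and failing runs were observed


def make_alternating_test() -> Callable[[], None]:
    """Hypothetical stand-in for a flaky test: passes on odd calls only."""
    calls = {"n": 0}

    def test() -> None:
        calls["n"] += 1
        assert calls["n"] % 2 == 1

    return test
```

A test that comes back as `flaky` is a candidate for quarantine or a closer look at its framework, library, or operating system dependencies.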

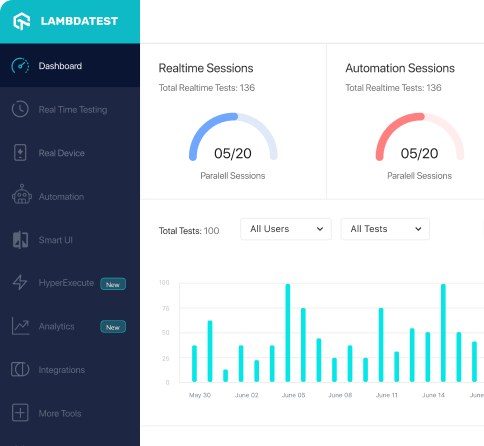

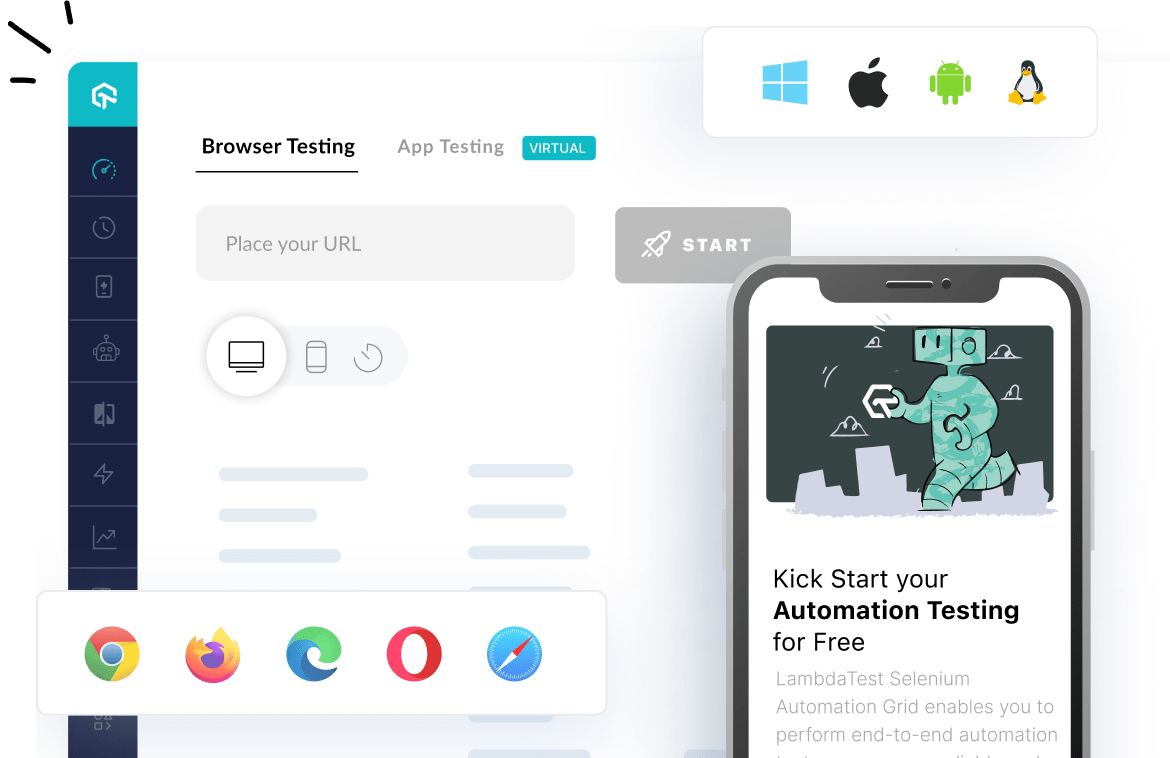

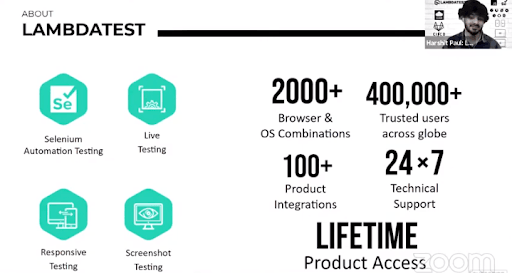

Harshit then went on to demonstrate how LambdaTest and Ozcode can help avoid all these issues and thus accelerate your product release velocity. He demonstrated what LambdaTest does and the features it offers for your web testing needs.

After that, Idan gave an overview of Ozcode and how to leverage the Ozcode platform for live debugging without making code changes on the server side.

It’s the combination of LambdaTest and Ozcode that makes it easy to increase your product release velocity: Ozcode gives you insight into what happened on your server, while LambdaTest gives you an army of devices for browser compatibility testing.

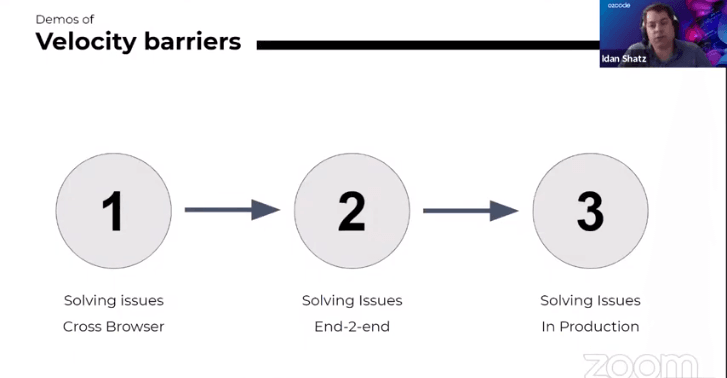

Together, Harshit & Idan presented demos of velocity barriers from the testing stage through to the production environment.

The demos highlighted solving cross-browser issues, solving issues end-to-end (E2E), and solving issues in production. Finally, they talked about the best practices to boost the release velocity.

Best Practices to Boost Release Velocity

Before jumping into the Q&A session, Harshit and Idan listed the best practices for boosting product release velocity:

- Increase your browser test coverage to cater to a wide spectrum of users.

- Close communication gap between Devs & QAs.

- Leverage the potential of cloud (wherever possible).

- Don’t reproduce – record errors with code level observability.

- Follow Shift-left testing.

- Bug Prioritization – measure bug metrics + estimate effort.

- Keep your Staging environment closely identical to the Production environment, whenever possible.

- Capture & debug issues in production with built-in PII Redaction.

Q&A Session

Before wrapping up, Harshit & Idan answered a number of questions raised by the viewers. Here are some of the insightful questions:

Does Ozcode support all language compatibility?

Idan- Currently, we support C# features. We now have a private Beta for supporting Java language and by the end of the year, we’re aiming to have Python and OJS.

Can we upload Visual Studio automation script in LambdaTest dashboard and get it executed?

Harshit- You cannot upload automation scripts into the LambdaTest dashboard because what we provide is an execution platform, and that’s actually the beauty of it. So you have all the code you need on your machine with the right IDE setup. You can also run it from cloud-based IDEs such as Gitpod. Once you run it from any IDE or your own setup, it will pass that value to Selenium, which is the end-point of test execution. All you need to do in your normal Selenium script is define the username and access key, which you will find on the LambdaTest dashboard, to access the capabilities offered by the LambdaTest platform.
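To make that last step concrete, here is a minimal sketch (not taken from the webinar) of pointing a Selenium script at a remote grid using a username and access key. The hub host, the `/wd/hub` path, and the `LT_USERNAME` / `LT_ACCESS_KEY` environment variable names are assumptions; check the LambdaTest dashboard and documentation for the actual values. `build_hub_url` and `open_remote_browser` are hypothetical helper names.

```python
import os
from urllib.parse import quote


def build_hub_url(username: str, access_key: str,
                  host: str = "hub.lambdatest.com") -> str:
    """Build a remote grid URL with credentials embedded (host is an assumption)."""
    user = quote(username, safe="")
    key = quote(access_key, safe="")
    return f"https://{user}:{key}@{host}/wd/hub"


def open_remote_browser(hub_url: str):
    """Start a remote Chrome session; requires the selenium package (v4+)."""
    from selenium import webdriver  # imported lazily so the helper above has no dependency

    options = webdriver.ChromeOptions()
    return webdriver.Remote(command_executor=hub_url, options=options)


if __name__ == "__main__":
    # Credentials are read from the environment rather than hard-coded.
    url = build_hub_url(os.environ["LT_USERNAME"], os.environ["LT_ACCESS_KEY"])
    driver = open_remote_browser(url)
    try:
        driver.get("https://www.lambdatest.com")
        print(driver.title)
    finally:
        driver.quit()
```

The rest of the script stays the same as a local Selenium run; only the driver construction changes.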

Under automation testing, do you provide frameworks with utilities for DB comparison, Excel read write etc.?

Harshit- If you’re referring to Behavior Driven Development (BDD), you can surely do that with LambdaTest. If you have test data in an Excel sheet, you can perform data driven testing or behavior driven testing with Selenium on the LambdaTest platform. All the test-automation frameworks compatible with Selenium are compatible with LambdaTest as well. You just need to use Remote Selenium WebDriver instead of local Selenium WebDriver, something that was demonstrated earlier.
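As a rough illustration of data driven testing (not something shown in the webinar), the sketch below feeds rows of test data into one test routine. It reads CSV with the standard library instead of Excel to stay dependency-free; the column names and the `check_login` function are hypothetical stand-ins for real Selenium steps.

```python
import csv
import io

# Sample data; in practice this would be exported from a spreadsheet.
SAMPLE = """username,password,expected
alice,correct-horse,success
bob,,failure
"""


def load_cases(source):
    """Read test cases from a CSV file-like object into a list of dicts."""
    return list(csv.DictReader(source))


def check_login(username: str, password: str) -> str:
    """Hypothetical stand-in for browser steps driven through Selenium."""
    return "success" if username and password else "failure"


def run_cases(cases):
    """Run the same check for every row and report (username, passed) pairs."""
    return [
        (case["username"],
         check_login(case["username"], case["password"]) == case["expected"])
        for case in cases
    ]


if __name__ == "__main__":
    for name, passed in run_cases(load_cases(io.StringIO(SAMPLE))):
        print(name, "OK" if passed else "FAILED")
```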

Idan- What I really like about the entire setup is that LambdaTest enhances the capabilities of Selenium for improved test coverage. Your question sounds to me more like an integration test, where you run some commands on your system and then validate that the database is correct. So those two things would just work amazingly together.

The only thing that I would like to suggest here is don’t use parallel testing, because when you’re comparing the database, you usually need Setup and Teardown functions to maintain the database state properly. Hence, running those tests sequentially should improve the robustness of the tests. Besides, there is good integration with other third-party tools for doing this.
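The Setup and Teardown idea can be sketched with Python's `unittest` and an in-memory SQLite database. This is an assumption-laden illustration rather than the setup used in the webinar: the `orders` table and the test names are hypothetical, and the in-memory database stands in for a shared test database.

```python
import sqlite3
import unittest


class OrderTableTest(unittest.TestCase):
    def setUp(self):
        # Put the database into a known state before every test.
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute(
            "CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)"
        )
        self.conn.execute("INSERT INTO orders (status) VALUES ('new')")
        self.conn.commit()

    def tearDown(self):
        # Discard the state so the next test starts clean.
        self.conn.close()

    def test_mark_shipped(self):
        self.conn.execute("UPDATE orders SET status = 'shipped' WHERE id = 1")
        row = self.conn.execute(
            "SELECT status FROM orders WHERE id = 1"
        ).fetchone()
        self.assertEqual(row[0], "shipped")

    def test_starts_with_one_new_order(self):
        rows = self.conn.execute("SELECT status FROM orders").fetchall()
        self.assertEqual(rows, [("new",)])


def run_sequentially():
    """Run the tests one after another with the default (serial) runner."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(OrderTableTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

With a shared database, two tests running at the same time could each see the other's half-finished changes, which is why a serial run is the safer default for this kind of check.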

What happens if the front-end and back-end are developed using two different programming languages? Can Ozcode detect if it’s a back-end issue?

Idan- It doesn’t really matter what front-end language you’re using. If the Ozcode agent is supporting the back-end, it will just monitor all the exceptions that happen there automatically. If there is an issue or maybe if the user didn’t know about the occurrence of the issue (or exception), you will be able to see the list of those exceptions with full-time recording. The user can then dig deep to see what’s going on. Later on when Java comes onboard at the Ozcode platform, you will also be able to debug when you have a cluster that is written in different languages.

Hope You Enjoyed The Webinar!

I hope you liked the webinar. In case you missed it, you can find the recording above. Make sure to share this article with anyone who wants to learn more about testing and debugging in production. Stay tuned for more exciting webinars. You can also subscribe to our newsletter Coding Jag to stay on top of everything testing and more!

That’s all for now, happy testing!
