How to Generate pytest Code Coverage Report

Idowu (Paul) Omisola

Posted On: July 2, 2024

Code coverage is a metric that describes how much of an application's source code a test suite executes. You can measure it in most programming languages, including Python.

Python testing frameworks like pytest can generate code coverage reports for automated tests through tools such as coverage.py and the pytest-cov plugin.

Let’s learn how to generate code coverage reports using the pytest framework.

What is Code Coverage?

Code coverage is a simple statistic that measures how many lines of your application source code run when a test suite executes. It's typically expressed as a percentage of the total number of lines subjected to testing.

Code coverage = (total code lines tested / total code lines subjected to testing) * 100

For instance, testing a class containing 100 lines of code subjects all 100 lines to testing. If your test skips 40 of those lines, your test suite has covered 60% of the class.

In that case, the number of actual code lines tested was 60, whereas the number of lines exposed to testing was 100. There might be bugs buried within the 40 omitted lines you don’t want to slip through to production. You want to improve your test and source code to increase coverage in that case.
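As a quick check of the arithmetic, here is a minimal sketch of the formula in Python. The function name coverage_percent is a hypothetical helper for illustration, not part of pytest or coverage.py.

```python
def coverage_percent(lines_tested: int, lines_subjected: int) -> float:
    """Return code coverage as a percentage, following the formula above."""
    if lines_subjected <= 0:
        raise ValueError("lines_subjected must be positive")
    return lines_tested * 100 / lines_subjected


# The 100-line class from the example, with 40 lines never executed.
print(coverage_percent(60, 100))  # 60.0
```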

Why pytest for Code Coverage Reports?

pytest has plugins and supported modules for evaluating code coverage. Here are some reasons you want to use pytest for code coverage report generation:

- It provides a straightforward approach for calculating coverage with a few lines of code.

- It gives comprehensive statistics of your code coverage score.

- It features plugins that can help you prettify pytest code coverage reports.

- It features a command-line utility for executing code coverage.

- It supports distributed and localized testing.

pytest Code Coverage Reporting Tools

Here are some of the most used pytest code coverage tools.

coverage.py

The coverage.py library is one of the most-used pytest code coverage reporting tools. It’s a simple Python tool for producing comprehensive pytest code coverage reports in table format. You can use it as a command-line utility or plug it into your test script as an API to generate coverage analysis.

The API option is recommended if you need to prevent repeating a bunch of terminal commands each time you want to run the coverage analysis.

While its command-line utility can need extra commands before it produces a polished report, the API option writes clean, pre-styled HTML reports you can view via a web browser.

Below is the command for executing code coverage with pytest using coverage.py:

    coverage run -m pytest

The above command runs pytest under coverage.py, so it measures every test that pytest discovers (by default, files named test_*.py or *_test.py).

All you need to do to generate reports while using its API is to specify a destination folder in your test code. It then overwrites the folder’s HTML report on subsequent runs.

pytest-cov

pytest-cov is a code coverage plugin for pytest that adds coverage options to the pytest command line. It is built on top of coverage.py.

Like coverage.py, you can use pytest-cov to generate HTML or XML reports in pytest and view a pretty code coverage analysis via the browser. Although using pytest-cov involves running a simple command via the terminal, the terminal command becomes longer and more complex as you add more coverage options.

For instance, generating a command-line-only report is as simple as running the following command:

    pytest --cov

The result of the pytest --cov command is shown below:

But generating an HTML report requires an additional option:

    pytest --cov --cov-report=html:coverage_re

Where coverage_re is the coverage report directory. Below is the report when viewed via the browser:

Here is a list of widely used command-line options for --cov:

| --cov options | Description |
|---|---|
| --cov=PATH | Measure coverage for a filesystem path. (multi-allowed) |
| --cov-report=type | Specify the type of report to generate. Type can be html, xml, annotate, term, term-missing, or lcov. |
| --cov-config=path | Config file for coverage. Default: .coveragerc |
| --no-cov-on-fail | Do not report coverage if the test fails. Default: False |
| --no-cov | Disable coverage report completely (useful for debuggers). Default: False |
| --cov-reset | Reset cov sources accumulated in options so far. Mostly useful for scripts and configuration files. |
| --cov-fail-under=MIN | Fail if the total coverage is less than MIN. |
| --cov-append | Do not delete coverage but append to current. Default: False |
| --cov-branch | Enable branch coverage. |
| --cov-context | Choose the method for setting the dynamic context. |
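Because the command grows as you add options, it can help to assemble it in one place. The sketch below is only an illustration: build_pytest_cov_args is a hypothetical helper, and my_package is a placeholder for your own source path. It uses only options listed in the table above.

```python
def build_pytest_cov_args(source, html_dir=None, fail_under=None, branch=False):
    """Assemble pytest-cov command-line options for a single source path."""
    args = [f"--cov={source}"]
    if branch:
        args.append("--cov-branch")
    if html_dir is not None:
        args.append(f"--cov-report=html:{html_dir}")
    if fail_under is not None:
        args.append(f"--cov-fail-under={fail_under}")
    return args


args = build_pytest_cov_args("my_package", html_dir="coverage_re", fail_under=80, branch=True)
print(" ".join(["pytest", *args]))

# To actually run it (requires pytest and pytest-cov to be installed):
#   import subprocess, sys
#   subprocess.run([sys.executable, "-m", "pytest", *args], check=False)
```

Keeping the options in a list like this also makes it easy to reuse the same settings in a script or CI job.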

Demo: How to Generate pytest Code Coverage Report?

The demonstration for generating pytest code coverage includes a test for the following:

- A plain name tweaker class example to show why you may not achieve 100% code coverage and how you can use its result to extend your test reach.

- Code coverage demonstration for registration steps using the LambdaTest eCommerce Playground, executed on the cloud grid.

We’ll use coverage.py to demonstrate code coverage for all the tests in this tutorial. Since it’s a third-party module, you need to install it first. You’ll also need to install Selenium WebDriver (to access WebElements) and python-dotenv (to keep your secret keys in a .env file instead of the code).

If you are new to Selenium WebDriver, check out our guide on what is Selenium WebDriver.

Create a requirements.txt file in your project root directory and insert the following packages:

    coverage
    selenium
    python-dotenv
    pytest

Next, install the packages using pip:

    pip install -r requirements.txt

As mentioned, coverage.py lets you generate and write coverage reports inside an HTML file and view it in the browser. You’ll see how to do this later.

Name Tweaker Class Test

We’ll start by considering an example test for the name tweaking class to demonstrate why you may not achieve 100% code coverage. And you’ll also see how to extend your code coverage.

The name tweaking class contains two methods. One is for concatenating a new and an old name, while the other is for changing an existing name.

    class test_should_tweak_name:
        def __init__(self, name) -> None:
            self.name = name

        def test_should_addNames(self, name):
            if self.name == "LambdaTest":
                new_name = self.name+" "+name
                assert new_name == "LambdaTest Grid", "new_name should be LambdaTest Grid"
                return new_name
            else:
                return self.name

        def test_should_changeName(self, name):
            self.name = name
            assert self.name == "LambdaTest Cloud Grid", "new_name should be LambdaTest Cloud Grid"
            return name

To execute the test and get code coverage of less than 100%, we’ll start by omitting a test case for the else statement in the first method and ignore the second method entirely (test_should_changeName).

    # Import the coverage.py module:
    import coverage

    # Start code coverage before importing other modules:
    cov = coverage.Coverage()
    cov.start()

    # Main code to be covered----------:

    import sys
    sys.path.append(sys.path[0] + "/..")
    from plain_tests.plain_tests import test_should_tweak_name

    tweak_names = test_should_tweak_name("LambdaTest")
    print(tweak_names.test_should_addNames("Grid"))

    # Stop code coverage and save the output in a reports directory---------:
    cov.stop()
    cov.save()

    cov.html_report(directory='coverage_reports')

Execute the test by running the following command:

    python run_coverage/name_tweak_coverage.py

Go into the coverage_reports folder and open index.html in your browser. The test yields 69% coverage (as shown below) since it never runs the else branch of the first method or the body of the second method.

Let’s extend the code coverage.

Although we deliberately ignored the second method in that class, it's easy to forget a case for the else statement when a test focuses only on validating the true condition. Including a negative test case (where the condition evaluates to false) extends the code coverage.
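To see why the false case matters, here is a toy line tracer built on Python's sys.settrace. It is only an illustration of the idea of recording executed lines, not coverage.py's actual implementation, and the classify function and executed_lines helper are hypothetical names made up for this sketch.

```python
import sys


def classify(name):
    if name == "LambdaTest":
        result = name + " Grid"
    else:
        result = name
    return result


def executed_lines(func, *args):
    """Run func(*args) and return the set of line numbers executed inside func."""
    lines = set()
    target = func.__code__

    def tracer(frame, event, arg):
        if frame.f_code is target:
            if event == "line":
                lines.add(frame.f_lineno)
            return tracer  # keep receiving line events for this frame
        return None  # ignore every other frame

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)  # restore whatever tracer was active before
    return lines


true_only = executed_lines(classify, "LambdaTest")
both = true_only | executed_lines(classify, "Not LambdaTest")
print(len(true_only), "lines ran with the true case only;", len(both), "with both cases")
```

The second call reaches a line that the first call never executes, which is exactly the gap a coverage report highlights.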

So what if we add a test case for the second method and another one that assumes that the provided name in the first method isn’t LambdaTest?

The code coverage yields 100% since we’re considering all possible scenarios for the class under test.

So, a more inclusive test looks like this:

    # Import the coverage.py module:
    import coverage

    # Start code coverage before importing other modules:
    cov = coverage.Coverage()
    cov.start()

    # Main code to be covered----------:

    import sys
    sys.path.append(sys.path[0] + "/..")

    from plain_tests.plain_tests import test_should_tweak_name

    tweak_names = test_should_tweak_name("LambdaTest")

    will_not_tweak_names = test_should_tweak_name("Not LambdaTest")

    print(tweak_names.test_should_addNames("Grid"))

    print(tweak_names.test_should_changeName("LambdaTest Cloud Grid"))

    print(will_not_tweak_names.test_should_addNames("Grid"))

    # Stop code coverage and save the output in a reports directory---------:
    cov.stop()
    cov.save()

    cov.html_report(directory='coverage_reports')

Adding the will_not_tweak_names variable covers the else condition in the test. Additionally, calling test_should_changeName from the class instance captures the second method in that class.
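The same three cases can also be written as ordinary pytest test functions, which pytest discovers on its own. The sketch below is an alternative layout, not the structure used in this tutorial: NameTweaker and its method names are hypothetical stand-ins for the name tweaking class, with the assertions moved out of the class and into the tests.

```python
class NameTweaker:
    """Hypothetical stand-in for the name tweaking class, without embedded assertions."""

    def __init__(self, name):
        self.name = name

    def add_names(self, name):
        if self.name == "LambdaTest":
            return self.name + " " + name
        return self.name

    def change_name(self, name):
        self.name = name
        return name


def test_add_names_appends_when_name_matches():
    assert NameTweaker("LambdaTest").add_names("Grid") == "LambdaTest Grid"


def test_add_names_keeps_name_otherwise():
    # Exercises the branch that the first coverage run missed.
    assert NameTweaker("Not LambdaTest").add_names("Grid") == "Not LambdaTest"


def test_change_name_replaces_name():
    tweaker = NameTweaker("LambdaTest")
    assert tweaker.change_name("LambdaTest Cloud Grid") == "LambdaTest Cloud Grid"
    assert tweaker.name == "LambdaTest Cloud Grid"
```

Each test function maps to one of the three calls in the runner script, so a missing case shows up as a missing test rather than a missing print statement.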

Extending the coverage this way generates 100% code coverage, as seen below:

Code Coverage on the Cloud Grid

We’ll use the previous code structure to implement the code coverage on the cloud grid. Here, we’ll write test cases for the registration actions on the LambdaTest eCommerce Playground. Then, we’ll perform pytest testing on cloud-based testing platforms like LambdaTest.

The tests run the registration actions while leaving out some parameters, for example by skipping some form fields or submitting an invalid email address.

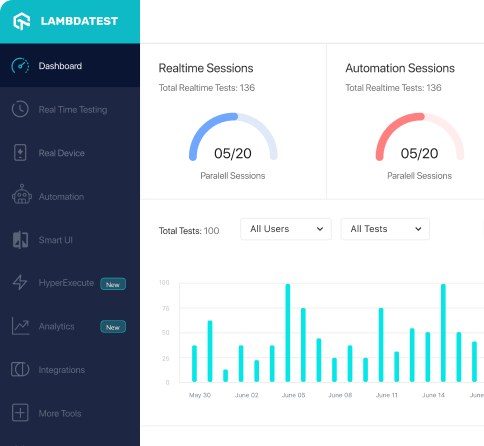

LambdaTest is an AI-powered test execution platform that enables you to conduct Python web automation on a dependable and scalable online Selenium Grid infrastructure, spanning over 3000 real web browsers and operating systems.

Test Scenario 1: Submit the registration form with an invalid email address and missing fields.

Test Scenario 2: Submit the form with all fields filled appropriately (successful registration).

We’ll also see how adding the missing parameters can extend the code coverage. Here is our Selenium automation script:

    from selenium import webdriver
    from dotenv import load_dotenv
    import os
    load_dotenv('.env')

    LT_GRID_USERNAME = os.getenv("LT_GRID_USERNAME")
    LT_ACCESS_KEY = os.getenv("LT_ACCESS_KEY")

    desired_caps = {
        'LT:Options' : {
            "user" : os.getenv("LT_GRID_USERNAME"),
            "accessKey" : os.getenv("LT_ACCESS_KEY"),
            "build" : "Test Coverage Idowu",
            "name" : "Firefox coverage demo2",
            "platformName" : os.getenv("TEST_OS")
        },
        "browserName" : "FireFox",
        "browserVersion" : "125.0",
    }

    gridURL = "https://{}:{}@hub.lambdatest.com/wd/hub".format(LT_GRID_USERNAME, LT_ACCESS_KEY)

    class testSettings:
        def __init__(self) -> None:
            # Note: the desired_capabilities argument was removed in Selenium 4.10;
            # newer releases expect an options object instead.
            self.driver = webdriver.Remote(command_executor=gridURL, desired_capabilities=desired_caps)

        def testSetup(self):
            self.driver.implicitly_wait(10)
            self.driver.maximize_window()

        def tearDown(self):
            if self.driver is not None:
                print("Cleaning the test environment")
                self.driver.quit()

Code Walkthrough:

First, import the Selenium WebDriver to configure the test driver. Get your grid username and access key (passed as LT_GRID_USERNAME and LT_ACCESS_KEY, respectively) from Settings > Account Settings > Password & Security.

The desired_caps is a dictionary of the desired capabilities for the test suite. It details your username, access key, browser name, version, build name, and platform type that runs your driver.

Next is the gridURL. We access this using the access key and username declared earlier. We then pass this URL and the desired capability into the driver attribute inside the __init__ function.

Write a testSetup() method that initiates the test suite. It uses the implicitly_wait() method to wait up to 10 seconds for elements to appear in the DOM. It then uses the maximize_window() method to expand the chosen browser window.

Finally, the tearDown() method stops the test instance and closes the browser using the quit() method.
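Recent Selenium releases no longer accept the desired_capabilities argument, so the driver setup may need an options object instead. The following is a minimal sketch under that assumption, not the setup used in this tutorial: build_lt_options and create_remote_driver are hypothetical helper names, while the environment variable names and capability values are the ones from the script above.

```python
import os


def build_lt_options(env=os.environ):
    """Collect the LambdaTest-specific settings from environment variables."""
    return {
        "user": env.get("LT_GRID_USERNAME"),
        "accessKey": env.get("LT_ACCESS_KEY"),
        "build": "Test Coverage Idowu",
        "name": "Firefox coverage demo2",
        "platformName": env.get("TEST_OS"),
    }


def create_remote_driver(env=os.environ):
    """Start a remote Firefox session; assumes a Selenium 4 release with options support."""
    # Imported lazily so build_lt_options() stays usable without Selenium installed.
    from selenium import webdriver

    options = webdriver.FirefoxOptions()
    options.browser_version = "125.0"
    options.set_capability("LT:Options", build_lt_options(env))
    grid_url = "https://{}:{}@hub.lambdatest.com/wd/hub".format(
        env.get("LT_GRID_USERNAME"), env.get("LT_ACCESS_KEY")
    )
    return webdriver.Remote(command_executor=grid_url, options=options)
```

With this layout, the testSettings class would call create_remote_driver() in its __init__ method instead of passing the capabilities dictionary directly.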

    from selenium.webdriver.common.by import By
    from selenium.common.exceptions import NoSuchElementException

    class element_locator:
        first_name = "//input[@id='input-firstname']"
        last_name = "//input[@id='input-lastname']"
        email = "//input[@id='input-email']"
        telephone = "//input[@id='input-telephone']"
        password = "//input[@id='input-password']"
        confirm_password = "//input[@id='input-confirm']"
        subscribe_no = "//label[@for='input-newsletter-no']"
        agree_terms = "//label[@for='input-agree']"
        submit = "//input[@value='Continue']"
        error_message = "//div[@class='text-danger']"

    locator = element_locator()

    class registerUser:
        def __init__(self, driver) -> None:
            self.driver=driver

        def error_message(self):
            try:
                return self.driver.find_element(By.XPATH, locator.error_message).is_displayed()
            except NoSuchElementException:
                print("All code in registration test covered")

        def test_getWeb(self, URL):
            self.driver.get(URL)

        def test_getTitle(self):
            return self.driver.title

        def test_fillFirstName(self, data):
            self.driver.find_element(By.XPATH, locator.first_name).send_keys(data)

        def test_fillLastName(self, data):
            self.driver.find_element(By.XPATH, locator.last_name).send_keys(data)

        def test_fillEmail(self, data):
            self.driver.find_element(By.XPATH, locator.email).send_keys(data)

        def test_fillPhone(self, data):
            self.driver.find_element(By.XPATH, locator.telephone).send_keys(data)

        def test_fillPassword(self, data):
            self.driver.find_element(By.XPATH, locator.password).send_keys(data)

        def test_fillConfirmPassword(self, data):
            self.driver.find_element(By.XPATH, locator.confirm_password).send_keys(data)

        def test_subscribeNo(self):
            self.driver.find_element(By.XPATH, locator.subscribe_no).click()

        def test_agreeToTerms(self):
            self.driver.find_element(By.XPATH, locator.agree_terms).click()

        def test_submit(self):
            self.driver.find_element(By.XPATH, locator.submit).click()

Start by importing the Selenium By object into the file to declare the locator pattern for the DOM. We’ll use the NoSuchElementException to check for an error message in the DOM (in case of invalid inputs).

Next, declare a class to hold the WebElements. Then, create another class to handle the web actions for the registration form.

The element_locator class contains the WebElement locations. Each uses the XPath locator.

The registerUser class accepts the driver attribute to initiate web actions. You’ll get the driver attribute from the setup class while instantiating the registerUser class.

The error_message() method inside the registerUser class checks for invalid-field error messages in the DOM after the test submits the registration form. The lookup runs inside a try block on every call, so the test covers it regardless.

If the method finds an input error message element in the DOM, the try block returns and the except block never runs, so coverage flags the except block as non-covered code.

Otherwise, Selenium raises a NoSuchElementException. This forces the test to log the print in the except block and mark it as covered code. This feels like a reverse strategy. But it helps code coverage capture more scenarios.

So, besides capturing omitted fields (web action methods not included in the test execution), it ensures that the test accounts for an invalid email address or empty string input.

Thus, if the error message element is in the DOM, the method returns whether that element is displayed. Otherwise, Selenium raises a NoSuchElementException, forcing the test to log the printed message.
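The two paths through error_message() can be tried out without a browser. The sketch below swaps in stand-ins for illustration only: NoSuchElement, FakeElement, and FakeDriver are hypothetical stubs, not Selenium classes, and the function is a free-standing copy of the method's logic.

```python
class NoSuchElement(Exception):
    """Stand-in for selenium.common.exceptions.NoSuchElementException."""


class FakeElement:
    def is_displayed(self):
        return True


class FakeDriver:
    """Hypothetical driver stub that finds the error element only when told to."""

    def __init__(self, has_error):
        self.has_error = has_error

    def find_element(self, by, value):
        if self.has_error:
            return FakeElement()
        raise NoSuchElement(value)


def error_message(driver):
    try:
        return driver.find_element("xpath", "//div[@class='text-danger']").is_displayed()
    except NoSuchElement:
        # Reached only when no error element exists, so coverage marks this line as run.
        print("All code in registration test covered")


print(error_message(FakeDriver(has_error=True)))   # try block returns True
print(error_message(FakeDriver(has_error=False)))  # except block runs; returns None
```

Running only the first call leaves the except block unexecuted, which is the line a coverage report would highlight in red.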

The rest of the class methods are web action declarations for the locators in the element_locator class. Excluding the fields that require a click action, the other class methods accept a data parameter, a string that goes into the input fields.

Next, create a test runner file for the code coverage scenarios. You’ll execute this file to run the test and calculate the code coverage.

    # Import the coverage.py module:
    import coverage

    # Start code coverage before importing other modules:
    cov = coverage.Coverage()
    cov.start()

    # Main code to be covered----------:

    import sys
    sys.path.append(sys.path[0] + "/..")
    from testscenario.scenarioRun import test_registration

    registration = test_registration()
    registration.it_should_register_user()

    # Stop code coverage and save the output in a reports directory---------:
    cov.stop()
    cov.save()

    cov.html_report(directory='coverage_reports')

The above code starts by importing the coverage module. Next, declare an instance of the coverage class and call the start() method at the top of the code. Once code coverage begins, import the test_registration class from scenarioRun.py. Instantiate the class as registration.

The class method, it_should_register_user, is a test method that executes the test case (you’ll see this class in the next section). Use cov.stop() to stop measuring coverage. Then, use cov.save() to save the collected coverage data.

The cov.html_report() method writes the coverage results into an HTML file inside the declared directory (coverage_reports).

And running this file executes the test and coverage report.
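One thing to keep in mind with this runner is that a failing test raises before the report is written. A possible variation, sketched here as a suggestion and not as part of the original runner, wraps the same start, stop, save, and html_report calls in a context manager. The name measured is a hypothetical helper.

```python
from contextlib import contextmanager


@contextmanager
def measured(cov, report_dir="coverage_reports"):
    """Start coverage, run the block, then always stop, save, and write the HTML report."""
    cov.start()
    try:
        yield cov
    finally:
        cov.stop()
        cov.save()
        cov.html_report(directory=report_dir)


# Usage with coverage.py (requires the coverage package):
#   import coverage
#   with measured(coverage.Coverage()):
#       from testscenario.scenarioRun import test_registration
#       test_registration().it_should_register_user()
```

Because the cleanup sits in a finally block, the HTML report is still produced when an assertion in the test fails.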

Now, let’s tweak the web action methods inside scenarioRun.py to see the difference in code coverage for each scenario.

Test Scenario 1: Submit the registration form with an invalid email address and some missing fields.

    import sys
    sys.path.append(sys.path[0] + "/..")

    from locators.locator import registerUser
    from setup.setup import testSettings
    import unittest
    from dotenv import load_dotenv
    import os
    load_dotenv('.env')

    setup = testSettings()

    test_register = registerUser(setup.driver)

    E_Commerce_palygroud_URL = "https:"+os.getenv("E_Commerce_palygroud_URL")

    class test_registration(unittest.TestCase):

        def it_should_register_user(self):
            setup.testSetup()
            test_register.test_getWeb(E_Commerce_palygroud_URL)
            title = test_register.test_getTitle()

            self.assertIn("Register", title, "Register is not in title")

            test_register.test_fillEmail("testrs@gmail")

            test_register.test_fillPhone("090776632")

            test_register.test_fillPassword("12345678")

            test_register.test_fillConfirmPassword("12345678")

            test_register.test_submit()

            test_register.error_message()

            setup.tearDown()

Pay attention to the imported built-in and third-party modules. We start by importing the registerUser and testSettings classes we wrote earlier. The testSettings class contains the testSetup() and tearDown() methods for setting up and closing the test, respectively. We instantiate this class as setup.

As seen above, the registerUser class instantiates as test_register using the setup.driver attribute. The dotenv package lets you get the test website’s URL from the environment variable.

The testSetup() method prepares the test environment at the start of the test case (the it_should_register_user method). Next, we launch the website using the test_getWeb() method. This accepts the website URL declared earlier. The assertIn method, inherited from unittest.TestCase, checks whether the declared string is in the title. Use the setup.tearDown() method to close the browser and clean the test environment.

As earlier stated, the rest of the test case omits some methods from the registerUser class to see its effect on code coverage.

Test Execution:

To execute the test and code coverage, go into the run_coverage folder and run the test_run_coverage.py file using pytest:

    pytest

Once the code runs successfully, open the coverage_reports folder and open the index.html file via a browser. The code coverage reads 94%, as shown below.

Although the other test files read 100%, locator.py covers 89% of its code, reducing the overall score to 94%. We omitted some web actions and entered an invalid email address while running the test.

Opening locator.py gives more insights into the missing steps (highlighted in red), as shown below.

Although you might expect the coverage to flag the test_fillEmail() method, it doesn’t, because the test did provide an email address, albeit an invalid one. The except block is the invalid parameter indicator: it only runs if the input error message element isn’t in the DOM.

As seen, the report flags the except block as not covered this time, since the input error message appears in the DOM due to the invalid entries.

The test suite runs on the cloud grid with some red flags in the test video, as shown below.

Test Scenario 2: Submit the form with all fields filled appropriately (successful registration).

    import sys
    sys.path.append(sys.path[0] + "/..")

    from locators.locator import registerUser
    from setup.setup import testSettings
    import unittest
    from dotenv import load_dotenv
    import os
    load_dotenv('.env')

    setup = testSettings()

    test_register = registerUser(setup.driver)

    E_Commerce_palygroud_URL = "https:"+os.getenv("E_Commerce_palygroud_URL")

    class test_registration(unittest.TestCase):

        def it_should_register_user(self):
            setup.testSetup()
            test_register.test_getWeb(E_Commerce_palygroud_URL)
            title = test_register.test_getTitle()

            self.assertIn("Register", title, "Register is not in title")

            test_register.test_fillFirstName("Idowu")

            test_register.test_fillLastName("Omisola")

            test_register.test_fillEmail("testrs@gmail.com")

            test_register.test_fillPhone("090776632")

            test_register.test_fillPassword("12345678")

            test_register.test_fillConfirmPassword("12345678")

            test_register.test_subscribeNo()

            test_register.test_agreeToTerms()

            test_register.test_submit()

            test_register.error_message()

            setup.tearDown()

Test Scenario 2 has a code structure and naming convention similar to Test Scenario 1. However, we’ve expanded the test reach to cover all test steps in Test Scenario 2. Import the needed modules as in the previous scenario. Then instantiate the testSettings and registerUser classes as setup and test_register, respectively.

To get an inclusive test suite, ensure that you execute all the test steps from the registerUser class, as shown above. We expect this to generate 100% code coverage.

Test Execution:

Go into the run_coverage folder and run the pytest command to execute the test_run_coverage.py file:

    pytest

Open the index.html file inside the coverage_reports folder via a browser to see your pytest code coverage report. It’s now 100%, as shown below. This means the test doesn’t omit any web action.

Below is the test suite execution on the cloud grid:

Conclusion

Manually auditing your test suite can be an uphill battle, especially if your application code base is large. While performing Selenium Python testing, leveraging a dedicated code coverage tool boosts your productivity, as it helps you flag untested code parts to detect potential bugs easily. Although you’ll still need human inputs to decide your test requirements and coverage, performing a code coverage analysis gives you clear direction.

Frequently Asked Questions (FAQs)

What is code coverage in pytest?

Code coverage in pytest measures the amount of Python code executed while the tests run. It helps identify which parts of your codebase have not been tested and thus might contain hidden bugs.

How to get Python code coverage?

To get code coverage in Python, you can use the pytest-cov plugin. Install it via pip (pip install pytest-cov), and then run your tests with pytest --cov=your_package_name to generate a coverage report.

How to increase test coverage in pytest?

To increase test coverage in pytest:

- Identify untested code parts using coverage reports.

- Write additional tests for those parts, focusing on edge cases and error handling.

- Continuously review and refactor tests to improve coverage metrics and test suite quality.